The Biggest Failures in Consumer Audio/Video Electronics History

While we all enjoy the latest technologies afforded to us in consumer electronics, early adopters often pay the price by getting behind new formats that dry up as quickly as the cash they sank into them. Then there are those formats and inventions that tease the buying public with alluring technology and promises of introduction “very soon,” but never seem to materialize despite the hype and publicity. Let's take a look at what we feel are the biggest product failures in consumer A/V electronics and try to figure out what went wrong. Some of these are familiar to everyone; others are less well-known, but their failure was no less spectacular.

8-Track Tape, 1965

To be fair and accurate, the 8-track tape player was not a commercial failure—in fact, it was incredibly popular and enjoyed widespread success until the cassette took over in the early 1970’s. It found its biggest success in automotive use—most of the major car companies offered an 8-Track stereo as a factory-installed option and many 3rd-party companies sold 8-Tracks as add-on equipment for cars. However, 8-Tracks were also popular in home music systems.

The idea for the 8-Track came about from the unsuitability of both vinyl records and open-reel tape systems to be used in cars. Consumers desired something more than just AM/FM radio—they wanted to be able to play the music of their own choosing when driving. The 8-Track actually originated from Lear Jet Corporation, under the name Lear Jet Stereo 8-Track Cartridge. It was a self-contained endless loop of tape within a plastic housing, roughly the size of a video tape. As other companies began to produce the system, it became known simply as “8-Track tape.”

Pioneer 8-Track Player

While it was an innovative (and mostly successful) first attempt to produce a viable tape-playing system for automotive and portable use, the system itself was terrible. The sound was decidedly “low-fi,” with a frequency response that barely reached past 8 or 9kHz. The design also required the tape to switch tracks during playback, resulting in a 2 or 3 second delay, often right in the middle of a song. The tapes themselves were prone to jamming, and usually there was no remedy other than to throw the tape out and buy another.

Unreliable, bad-sounding and too large—that was the 8-Track Tape. As soon as something better came along, 8-Track disappeared so fast that it was like it never existed. But for history’s sake, it needs to be on this list.

Quadraphonic Sound, 1973

In the early days of home audio, there was no surround sound. Then in the early 1970’s, people discovered that you could increase the spaciousness of the sound by wiring up an additional pair of speakers, fed by the difference signal between the front channels of a normal stereo recording. This circuit is generally credited to famed electronics designer David Hafler. Dynaco, a popular 1970’s manufacturer of high-performance but value-priced equipment, offered the “Dynaquad” circuit in its SCA-80Q integrated amplifier and also as a separate add-on, the model QD-1 Quadaptor.
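To make the “difference signal” idea concrete, here is a minimal Python sketch. It is only an illustration under simplifying assumptions: the function name `difference_signal` and the sample values are hypothetical, and the actual wiring, polarity and level details of the Hafler or Dynaquad hookup are not modeled.

```python
def difference_signal(left, right):
    """Return the per-sample difference (left minus right) of two stereo channels.

    Simplified illustration only: the real circuit derives this difference
    passively at the speaker terminals; this just shows the arithmetic.
    """
    return [l - r for l, r in zip(left, right)]


# Hypothetical sample values, chosen for illustration.
centered_left = [0.5, -0.25, 0.75]
centered_right = [0.5, -0.25, 0.75]   # identical in both channels (e.g. a centered vocal)

hard_left = [0.5, -0.25, 0.75]
silent_right = [0.0, 0.0, 0.0]        # content present in the left channel only

# Identical content cancels; one-sided content passes through to the extra speakers.
print(difference_signal(centered_left, centered_right))
print(difference_signal(hard_left, silent_right))
```

Material that is identical in both channels cancels out of the difference, while material that differs between the channels (often ambience and reverberation) is what reaches the extra pair of speakers.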

Real 4-channel Quadraphonic Sound came a short time later. Marketing people thought the next big thing in sound would be to put the listener “right in the middle of the orchestra,” so to speak. It would be dramatic and impactful. It would also necessitate four speakers instead of two, which was appealing to the manufacturers. In what was the consumer electronics industry’s first no-winner format war, there were not two, but three competing, mutually incompatible 4-channel systems. It was a preview of what was to come with Beta and VHS, PC and Mac, Dolby Digital and DTS, Plasma and LED, DVD-A and SACD, and on and on.

Some of these format wars have had conclusive winners and losers, like Beta and VHS, or more recently HD DVD and Blu-ray. In others, the competing formats have learned to play nice with each other and even establish some degree of compatibility, like PC and Mac computers, where they now share cross-compatibility of Microsoft Office programs and both systems can do e-mail, web surfing, etc. and communicate with each other no problem. In some instances, the less popular format has simply faded away without any real harm being done to the end user (plasma TV owners can receive and enjoy programs just as easily as owners of LED TVs can, even if plasma eventually fades off into the sunset).

Kenwood Quad 4CH Receiver

But four-channel audio was a disaster right from the start, both technically and marketing-wise.

To begin with, there were three distinct encode-decode systems: two matrixed/phase-differential systems, one called SQ and the other called QS. There was also a “discrete” system called CD-4 that was LP-based, requiring a special phono cartridge with response up to 40kHz, to correctly track the “pilot signal” in the grooves of the CD-4 record and send that signal to the decoder in the electronics.

SQ would sort of decode QS records and vice-versa, but CD-4 was totally incompatible with the other two. All three systems would fabricate some kind of phony multi-channel sound when playing a conventional 2-channel LP or cassette tape.

None of the three systems was particularly great-sounding, but far and away the biggest impediment to marketplace acceptance was that the record companies didn’t have anything close to a clear idea as to which of the three systems, if any, they should back. Sound familiar (remember DVD-A and SACD)? With the major recording labels taking a “wait and see who the market winner will be before we commit to recording in that format” attitude, there was no significant amount of popular software available for sale in the record stores. With no clear four-channel software winner in the stores (everything remained two-channel stereo), the electronics manufacturers were reluctant to commit to putting just a single format into their products. Therefore, receivers had to have all three decoding systems on board, making them very expensive.

It was a perfect storm of bad conditions for the electronics manufacturers and the record stores. Not one of the three competing formats could establish a marketplace “win,” so the entire Quadraphonic house of cards simply collapsed in a messy, unsalvageable heap. This debacle really left a bad taste in the consumer’s mouth for quite a while and every “format war” since has been viewed with a suspicious “Oh no, here we go again” attitude by the market.

Betamax, 1975

Video recording is so commonplace these days that we often forget how technologically difficult it was in the 1970’s. Sony was actually the first company to successfully market a consumer video recording system. That system was called Betamax, and for its day, it was an extremely advanced analog recording system, able to put a color video signal several megahertz wide (versus roughly 20kHz for audio) onto a compact tape cassette. In its initial form, recording time was limited to one hour, but this was expanded to five hours in later versions.

Sony Betamax Player

Matsushita (Panasonic) and Victor Corporation (JVC) teamed up on a competing video tape recording format called VHS (Video Home System, although we’d bet no one today remembers that). It operated on the same essential principles as Sony’s Betamax, but the details were different, both electrically and mechanically, and the two systems were wholly incompatible. The Betamax system was actually somewhat superior in terms of video resolution and mechanical simplicity, but VHS had one huge marketplace advantage: it had a recording time of two hours compared to Betamax’s one. As the manufacturers introduced additional (slower) recording speeds to their units (sacrificing video quality for longer recording time), the final record-time contest results were Betamax 5 hours, VHS 6 hours. It was a marketplace advantage that VHS rode to a final, conclusive knockout victory. Matsushita ended up licensing their system to all the big names of the time (RCA, Sylvania, Magnavox, Sears, etc.) and Sony’s Betamax never stood a chance. Sony eventually swallowed their stubborn corporate pride and introduced their own line of VHS recorders in the mid-80’s. So strong was the Sony name that they rocketed to the No. 1 sales position in VHS within a year, zooming past Panasonic. But who knows how many millions of dollars they forfeited by sticking with their losing Betamax system from 1976 to 1986.

Considering the immense importance of consumer video recording and incredible cultural significance of rented videotape movies during the 80’s and 90’s, Sony’s Betamax failure could be considered one of the biggest consumer electronics flops of all time.

Elcaset, 1977

In the late 1970’s, major audio equipment manufacturers like Sony, Panasonic and TEAC recognized that the existing compact cassette format had serious technical limitations. With a tape that was only 1/8” wide, running at the incredibly slow speed of 1 7/8 IPS (inches per second), tape saturation and distortion were inevitable. Clever engineering and Dolby Noise Reduction had given this originally dictation-only low-fi medium fairly acceptable high-fidelity performance, but it was just barely “good enough” by any objective audiophile measure.

Reel-to-reel tape recorders ran at faster speeds (3 ¾, 7 ½ and 15 IPS) and exhibited far superior performance. In fact, a good open-reel tape recorder was the best playback system of the day. But they were hideously inconvenient and troublesome to use, with their manual “feeding” of the tape from one reel onto the other.

What if you could combine reel-to-reel performance with cassette convenience and ease of handling? Wouldn’t that be great? That was the thought and rationale behind the Elcaset (“El” = “Large” cassette, about the size of a VHS video tape, running at 3 ¾ IPS). It made perfect sense and the units performed exactly as intended. But they never caught on. A few Sony units made it to retail shelves in the fall of 1977, but they came and went very quickly. Beautiful units, well-made, terrific performers. But no one wanted them. A shame, really.

LaserDisc, 1978-1997

This was a 12-inch play-only format, a kind of “video LP,” if you will. The format achieved modest success and was superior to VHS tape in terms of image quality and near-CD in terms of audio quality. At trade shows and in most retail stores, when the presenter wanted the best video and audio quality, they used LaserDisc. LaserDisc was also the first consumer source medium to offer discrete 5.1 audio (on later models).

However, the players themselves were clunky and noisy, and the whole format (both the players and the discs themselves) was expensive. People weren’t favorably disposed towards buying and building an expensive collection of play-only “video LPs.”

Pioneer LD-S2 Laserdisc Player

Although technically superior to VHS in terms of image quality, the discs were heavy and easily damaged, and the players were noisy compared to VCRs. The lack of recording capability and the high costs of discs put the nail in the coffin of this technology. Once the CD was introduced, later versions of the LaserDisc were able to play both the 12-inch videodisc and the audio CD. However, the DVD was right around the corner (1996) and that put a merciful end to the LaserDisc’s surprisingly long but never overly successful market run.

LaserDisc was not the only video disc format, however. In 1981, to great fanfare, RCA introduced its CED (Capacitance Electronic Disc) VideoDisc system. The discs themselves were 12-inch grooved discs, played, amazingly enough, with a diamond stylus. RCA and a few other companies managed to sell about 750,000 units before they abandoned the format in 1984. Although the players were about the same price as a VCR, the movie discs were incredibly expensive: as much as $35 ea., and that’s in 1981 dollars! As with the LaserDisc, this was a play-only format, and it was an even bigger flop than LaserDisc.

The Finial Laser Turntable, 1986

The audiophile’s dream: A turntable that played those rich, organic, luscious-sounding analog vinyl LPs with perfect accuracy, extracting every last wonderfully lifelike bit of music from its grooves. Analog LPs—warm, beautiful, seductive. None of that harsh, cold, analytical, grating, artificial CD digital sound. No, we’re going to have perfect analog LP sound….but, this time, it’ll be without record wear, skipping, mistracking, finicky cartridge alignment issues, tonearm balancing, fragile replacement styli, or any of the other complications and aggravations of stylus-based record playback.

Laser Turntable

Sure, the CD had just been introduced a few years prior and everyone seemed to like it, but real audiophiles knew better. The analog LP had superior sound, at least compared to the first-generation CD players and digital recording methods of the time. The common misconception was that you just can’t digitize music, break it up into thousands of bits, then re-assemble it and expect that it will still sound like real music. It took years to overcome this perception about digital music, and some audiophiles still believe it today, which is partly why vinyl records still exist.

What if you could keep all your prized LPs, and they’d never age any further? No more wear. And they’d sound better than ever. Sure, you might still buy a CD player and you might even use it for casual listening, but when the time came for serious listening, it was LPs for you. Nothing else.

This was the promise and attraction of the Finial Laser Turntable. Audiophiles wanted it in the worst way and it would have found a small but substantial buying market, willing to pay $2000-3000 for the unit. It could have lived quite nicely alongside the then-new CD format and it would have legitimized the millions of LPs lining the shelves in audiophile living rooms everywhere.

Unfortunately, it proved harder to perfect the technology than originally anticipated, and the economy took a downward turn right at the time Finial was looking to bring it to market. Suppliers raised their costs. Venture capital investors got cold feet. The potential market size shrank. So, the Finial Laser Turntable never actually made it to market. There were a few real, functioning hand-built prototypes, however, so the fantasy of “perfect, wear-free analog LP playback” lives on in the imaginations of a few rabid audiophiles whose memories stretch back 30 years.

DAT, 1987

When Digital Audio Tape was introduced by Sony, these little cassettes were able to record digitally at CD quality and were meant to replace the aging analog cassette tape. They were superior to cassettes in every way and more durable and portable than CD, but they weren't inexpensive. So why did Sony fail yet again? The music industry’s fear of piracy and the costs of selling albums recorded on DAT restricted its usage mostly to professional studios. Years later, much less expensive means of digital storage took over (such as MP3, WAV, etc.), which, ironically, were much easier to pirate.

Panasonic/Philips Digital Compact Cassette (DCC), 1992

Eight years after the CD had taken the US audio market by storm, there was a faction of audio suppliers that were convinced that the CD’s inability to record had left open a huge, unfilled niche in the market. But the CD had educated the consumer to the pluses of digital audio performance, so any new recording technology would have to be digitally-based and share that medium’s sonic advantages.

Panasonic/Philips thought they had the answer: the Digital Compact Cassette (DCC). Here was a format with the same size and operational familiarity as the analog cassette, but this new system was digital, with all the advantages that afforded in terms of frequency response, s/n and dynamic range. Compared to the lowly analog cassette (a format that had been around in ‘hi-fi’ form since 1970, over 22 years!), the DCC promised the same operational simplicity and portability, but with the sonics of the CD. Plus, Digital Compact Cassette machines could play back the old analog cassettes, so users could jump right to the DCC format without rendering their existing cassette collections worthless.

A “can’t miss,” right?

In spite of the seemingly unarguable logic behind the DCC, the buying public yawned. By the end of 1996, the manufacturers had thrown in the towel. The lowly, noisy, restricted frequency-range analog cassette persists to this day, like the cockroach that will survive a nuclear holocaust, yet the DCC—which offered incomparably superior sound quality, recording capability and backwards compatibility with the untold millions of analog cassettes still in use—failed to catch on. This is a format that probably deserved a better fate.

Sony MiniDisc, 1992

At the same time that Philips/Panasonic were attempting to leverage the existing analog cassette market into their new backwards-compatible DCC format, Sony went their own way and introduced a small recordable digital disc called MiniDisc. This new format was totally different from the Panasonic DCC and seemed to set up in the customer’s mind another “format war,” similar to the Betamax vs. VHS conflict a generation earlier. This time, however, there was no real winner. Sony’s over-the-top introductory advertising notwithstanding (the first ads showed a young, sloppily-dressed music enthusiast jumping up and exclaiming, “I can record on a disc! I can record on a disc!”), the MiniDisc failed to catch on to any major extent in America or Europe, and achieved only modest success in its home country of Japan.

Unlike DCC, however, which really disappeared completely in a hurry, MiniDisc soldiered on in limited numbers until the early 2010’s before dying a deservedly unnoticed death.

DIVX, 1998

Piloted by electronics retailer Circuit City, the idea was that you rent a disc, watch it for 2 days, and throw it away. The problem was that DIVX discs wouldn't play in regular DVD players, thus requiring consumers to purchase a DIVX-capable player, a tough sell in a marketplace flooded with satisfied DVD player owners. By the time Netflix and Blockbuster came along making DVD rentals simple, DIVX was dust in the wind, just like the retailer that birthed it.

DIVX DVD Player

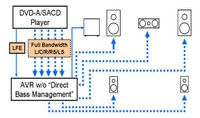

DVD-A and SACD, 1999

Once again we saw a format war, this time between DVD-A and Sony's SACD. Both formats offered higher resolution than standard CD and, more importantly, they did so in full 5.1 discrete multi-channel. Could multi-channel music finally succeed in a digital format where it failed in analog via Quad years ago? Technically, it should have, but copyright paranoia squashed any chances for mainstream adoption. As a result, consumers were left with two new formats that had no bass management and relied on a kludgy connection method via 6 analog cables between the source device and preamp. It wasn't until years later that these formats would pass via a digital connection (first FireWire, then HDMI), but even after all of that was sorted out, software was scarce and expensive, restricting it to a very niche market.

Not too long after, Blu-ray took over and offered the same multi-channel high-resolution benefits of both formats, but this time with a distinct advantage: HD video. Consumers can now listen in high fidelity and see their favorite artists live in HD and, even more importantly, watch movies in HD with lossless audio.

3D TV, 2000

For years the motion picture industry dabbled with 3D in the cinema, first with those gawky red/blue anaglyph glasses that produced a 3D image through color filtering, and then later with polarized lenses that allowed your brain to fuse two separate images on the screen into one. More advanced systems employed active glasses that used LCD shutters to darken each lens alternately, in sync with the display, so that each eye saw only the frames intended for it. Although this was an improvement, it still didn't compensate for the fact that being forced to wear glasses is a game-killer, especially for people already wearing prescription lenses. 3D still caused eye strain among some viewers, along with a loss of brightness and image detail, especially at some viewing angles. Consumers at home simply didn't want to deal with those pesky glasses, and the content wasn't abundantly available. The ultimate nail in the coffin was when the display manufacturers dropped 3D support in favor of 4K UHD, relegating 3D primarily to the cinema, where it belongs. LG and Sony both ended production of 3D TV as of 2017. RIP.

HD DVD, 2007

Decades after Sony lost the battle of analog video tape to Panasonic/JVC, they were at it again, this time competing against Toshiba. Before Toshiba could get their feet planted with their new HD DVD format, Sony threw so much marketing money into Blu-ray that they virtually bought a market win. This included arming their PS3 gaming system with Blu-ray technology at virtually no cost penalty to the consumer. Compare that to the Xbox 360, which only spun DVDs unless the consumer added the costly HD DVD module, which almost nobody did. Analysts believe that when Sony got Warner Brothers to adopt Blu-ray exclusively, it won the battle against HD DVD. We saw this first-hand at the Toshiba booth during the CES 2008 show. Their booth was all but dead, with the exception of the vultures circling to reap the rewards of road kill.

H-PAS (Hybrid Pressure Acceleration System), 2010

Timing is everything in life, isn’t it? Applying for a job? Looking to make the most of a social situation? Investing in the market? Everything depends on timing and many times, it’s beyond your control.

Let’s look at home loudspeakers. From the beginning of stereo (in 1958) right through the time when Home Theater began to dominate (early 1990’s), speakers were displayed, demonstrated and sold at retail in pretty much the exact same way: There was a ‘soundroom’ in the store with a wall of speakers. The salesperson would switch instantaneously between 2 pairs (the ‘A-B’ comparison) and the customer would decide which ones he liked better.

Almost all speakers in this time period were full-range speakers, whether they were bookshelf speakers or floorstanding speakers. Manufacturers were always looking for new and better ways to squeeze more bass out of their designs, without making them bigger and without making them less efficient (needing more power to play at a given loudness level). Ported, sealed, Aperiodic, Transmission Line, whatever—they all had pluses and minuses and they all entailed tradeoffs.

H-PAS was a truly innovative bass-loading system developed around the year 2000 by engineer Phil Clements of the small speaker company Clements Loudspeakers. He partnered up with Atlantic Technology a few years later, who refined the system and brought it to market in several highly-reviewed models in the 2010-2015 timeframe. H-PAS delivered deeper bass in a smaller enclosure without any sacrifice in efficiency—exactly the kind of advantages the market would have eaten up in 1970. In 2010, the marketplace shrugged with bored indifference. Great stereo speaker sound is simply not the driver of consumer excitement today, not in the age of social media, ear buds, streaming audio and electric cars.

Perhaps H-PAS would have been better used bringing its surprising bass to smartphones, tablets and thin LCD TVs, areas where the old limitations of size, bass extension and efficiency still apply. Or, perhaps today’s consumers are far more concerned about speed and convenience, and they just don’t care that much about sound quality.

Of course today, it's so easy to get good compact powered subwoofers on the cheap that can be tucked away in a corner to easily extend the bass range of more anemic bookshelf speakers nullifying the H-PAS advantage. This is especially true in a 5.1 system environment where subwoofers are a requirement. 40 years ago, H-PAS would’ve revolutionized the stereo speaker industry. Today, it’s an asterisk. Timing is everything.

Curved HDTV, 2014

After years of pushing thinner and flatter TVs, manufacturers decided curved was the new thin. The claim is that they provide a more immersive viewing experience, making you feel like you're watching a screen larger than it really is. The reality is that the curved shape makes it harder for LED backlights to spread light evenly across the panel, which can affect brightness uniformity. And for those sitting off axis from the TV, the curve introduces pronounced geometric distortions.

Curving a panel would only make sense if the picture were wider than the viewer's peripheral vision, as in a large cinema. But on 65" and smaller displays, that's usually not the case, since the typical viewing distance is too great. Add to that the fact that the manufacturing process is more costly, making curved displays more expensive to the end user with no real benefits, and it's no wonder we are seeing fewer and fewer of these displays on the retail floor these days.
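To put a rough number on the viewing-angle argument, here is a small Python sketch that estimates how much of your horizontal field of view a flat 16:9 screen fills. The function names, the 16:9 aspect ratio and the 9-foot (108-inch) seating distance are assumptions chosen for illustration, not figures from any manufacturer.

```python
import math


def screen_width_in(diagonal_in, aspect_w=16, aspect_h=9):
    """Width in inches of a flat screen, given its diagonal and aspect ratio."""
    return diagonal_in * aspect_w / math.hypot(aspect_w, aspect_h)


def horizontal_angle_deg(diagonal_in, distance_in):
    """Horizontal angle (degrees) the screen subtends for a centered viewer."""
    width = screen_width_in(diagonal_in)
    return math.degrees(2 * math.atan(width / (2 * distance_in)))


# Assumed example: a 65" TV viewed from 9 feet (108 inches).
angle = horizontal_angle_deg(65, 108)
print(f"A 65-inch screen at 108 inches fills about {angle:.1f} degrees")
```

Under these assumptions the screen fills only about 30 degrees of the viewer's horizontal field, a small slice of what the eyes can take in, which is consistent with the point that a living-room display does not come close to wrapping around the viewer's peripheral vision.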

Atmos-Enabled Speakers, 2015

5.1 has been the de facto standard of surround sound since the days of Dolby Digital. Dolby and DTS were looking for ways to push past the 5.1 barrier and they both achieved moderate success with 7.1, which added discrete back channels.

What was next? Height, the vertical dimension of sound. Dolby was first to market with Atmos, an object-based decoding scheme that maps each sound’s path as an object rather than just directing it to fixed channels. Given the poor adoption of front/back height channels via Dolby Pro Logic IIz, Dolby knew it would be a tough sell to get consumers to go beyond 5.1 and 7.1 speaker schemes. So they devised a way of adding height without adding more speakers in discrete locations on the wall or ceiling. Hence the Dolby Atmos-enabled speaker was born, or as we refer to them, "bouncy house" speakers.

KEF Atmos-Enabled Speaker

In our opinion, where Dolby went wrong was in aggressively marketing these speakers as the "preferred" solution over discrete speakers, while some members of the press who were in its pocket shamelessly regurgitated that marketing nonsense as "the greatest breakthrough in 20 years". Dolby should have put its best foot forward with this technology by emphasizing the importance of discrete overhead speakers, as is done in the cinema.

Dolby also charged a licensing fee to manufacturers producing speakers with the "Dolby" stamp on them, and many consumers saw this as nothing more than a money grab. The technology also had limitations: it required end users to replace their wall-mounted dipole surround speakers with ear-level bookshelf monopoles and then slap a pricey (usually $500/pr. or higher) Atmos-enabled speaker on top. That made it a tough pill for consumers to swallow. As a result, only a handful of manufacturers still produce these speakers, and more than 90% of home theater installs remain 5.1 or 7.1 speaker configurations.

Dolby’s Atmos-enabled speaker is ironclad proof, once again, that a marginal new technology that doesn’t deliver an undeniably compelling, tangible step up in performance over an existing system, and that also renders currently owned equipment obsolete or unusable, is doomed to failure. Justifiable, understandable, well-deserved failure.

Bonus: Tweaks

From magic markers that allegedly reduce static during playback of your CDs to cable elevators that lift your cables off the floor to, again, reduce "static electricity", these devices are not only nonsensical, they also never really caught on with most audiophiles. To this day, they remain a very small niche supported only by the most "suggestible" audiophiles, who are desperately trying to improve system performance while ignoring the obvious things that work, such as speaker upgrades, better speaker placement, and fixing room acoustics problems.

The ever-popular Shun Mook Mpingo Disc—the ultimate snake oil tweak.

Conclusion

Well, that's our list of failed consumer products and technologies. It's important to note that without some of these failures, we wouldn't have many of the great technologies we are enjoying today. The failure of LaserDisc eventually led to the success of CD, followed by DVD. The failure of DVD-A led to a very similar lossless compression algorithm used in multi-channel Blu-ray today. It's hard to know which of today's failures will lead to tomorrow’s success stories, but we will keep observing and appreciating the pioneering manufacturers and the early adopters who take the risk in pursuit of the next technological breakthrough. Every step brings us closer to the real thing.

Please comment in the related forum thread below and let us know if you think any other products should have been included on this list.