The Most Memorable Audio Receivers of the Last 50 Years

This article takes a slightly different angle than the recent article, “The 10 Most Influential Speakers of the Last 50 Years.” Here we cover the most “memorable” receivers, not necessarily the most influential. Neither article is about “the best,” but we’re sure that won’t stop the flood of how-could-you-leave-this-off comments.

Actually, those comments are the very reason we do these articles, so we’d love to hear them. Round-up/historical articles like these are a heckuva lot of fun to write, and it’s even more fun to hear the reactions and read the comments. If the response to the Speakers article was any indication, this one should spark some lively debate of its own.

We cover everything from vintage two-channel units to the more recent multi-channel surround AV receivers. We discuss the evolution from tubes to transistors, power ratings and the FTC, and the social, economic, and demographic changes that have occurred in America since the 1960s and how they have affected the receiver market.

So, in rough chronological order from oldest to newest, here are our picks for the 10 (or so) most memorable receivers of the last 50 years:

Two-Channel Simplicity: The Good Old Days

Fisher 500-T

The Fisher 500-T receiver from around 1965 was a hugely important unit in the evolution of American hi-fi. Fisher and H.H. Scott were the two most prominent U.S. manufacturers of mainstream electronics, and the 500-T was Fisher’s first all-transistor unit. Early transistor models used germanium transistors, before superior silicon parts were available. Germanium transistors had narrower bandwidth, less gain and were not particularly reliable, giving the first transistor units a somewhat shaky reputation for inferior sound and questionable quality.

Of particular interest is this ad’s headline, boasting that it’s a “90-Watt” receiver. 90 watts how? Per channel? 45 per channel, so 90 watts total? At what level of distortion? Over what bandwidth? Both channels driven simultaneously or only a single channel driven?

This is how it was done in those days: Let the buyer beware!

Way back in the ’60s and early ’70s, before the FTC stepped in (1974) and made all the stereo manufacturers clean up their deceptive advertised wattage ratings, companies would use all kinds of ratings.

There was Continuous or RMS, but since this was the smallest, least-impressive number, it was always listed last, in small print, if listed at all.

Double the RMS was “Dynamic” or “Peak” or “Music” power—the rationale being that an amplifier could likely deliver about double its continuous rating on a temporary peak in the music.

Double that was a really bogus number called “IPP” or Instantaneous Peak Power. The flimsy rationale was that an amplifier—if it had enough of a power supply—could probably muster about double its Peak power for the briefest of instants, if it had the wind at its back and you completely disregarded the distortion.

So a 30-watt/channel RMS stereo amplifier became a 60-watt/ch peak amp, which became a 120-watt/ch IPP amp. Adding together the two channels, manufacturers would advertise “240-watt Amplifier!” for a 30-per-side unit. Ugh.
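The inflation chain above is simple enough to sketch in a few lines of Python. This is only an illustration of the arithmetic described in the text; the function name and dictionary keys are made up for the example, and the clean factor-of-two steps are the rule of thumb quoted above, not anything a manufacturer published.

```python
def inflate_rating(rms_per_channel: float, channels: int = 2) -> dict:
    """Walk a continuous (RMS) rating up the pre-1974 advertising ladder."""
    peak = rms_per_channel * 2      # "Dynamic" / "Peak" / "Music" power
    ipp = peak * 2                  # "Instantaneous Peak Power"
    advertised = ipp * channels     # sum every channel for the headline number
    return {
        "rms_per_channel": rms_per_channel,
        "peak_per_channel": peak,
        "ipp_per_channel": ipp,
        "advertised_total": advertised,
    }

ratings = inflate_rating(30)
for name, watts in ratings.items():
    print(f"{name}: {watts} watts")
```

Run it with 30 watts per side and the headline figure comes out eight times the honest number.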

In 1974, the FTC came in and mandated that audio amplifier power specifications had to state RMS/continuous power first, in the largest type, specified over a stated frequency bandwidth, at a stated THD level, with both channels driven simultaneously. Furthermore, the FTC mandated a warm-up or “preconditioning” period of an hour at 33% of rated power at 1kHz before measurements were taken.

The manufacturers hated this one, because 33% power is right in the heart of the least efficient operating range for typical Class AB amplifiers (which all of these were), and so the amps would run very hot during the preconditioning period. That necessitated large, heavy heat sinks for new designs, or sometimes a downgrade in power ratings for existing designs in order to meet the new requirements. For example, Dynaco—a well-regarded manufacturer of better-than-midgrade electronics—had to de-rate their popular SCA-80 integrated amplifier from 40 watts RMS per channel to 30 watts RMS per channel, because the unit ran too hot during the new FTC-mandated preconditioning period.
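Why one-third power is such a hot spot can be shown with the standard textbook model of an ideal class B output stage driving a resistive load with a sine wave. The sketch below is an approximation under those assumptions: it ignores the idle bias current a real Class AB stage adds, and it is not a measurement of any receiver mentioned here. The function name is hypothetical.

```python
import math

def class_b_heat_fraction(output_fraction: float) -> float:
    """Heat in the output devices, as a fraction of maximum sine-wave output power.

    Idealized class B stage, resistive load, sine-wave signal:
      supply power = (4/pi) * sqrt(x) * Pmax
      output power = x * Pmax
    where x is the fraction of maximum output power being delivered.
    The difference between the two is what the heat sinks must shed.
    """
    return (4 / math.pi) * math.sqrt(output_fraction) - output_fraction

# Output fraction at which the heat peaks in this model (about 0.405)
worst_case = 4 / math.pi ** 2

for label, x in [("10% power", 0.10), ("33% power", 1 / 3),
                 ("worst case", worst_case), ("full power", 1.0)]:
    print(f"{label:>10}: heat = {class_b_heat_fraction(x):.3f} x Pmax")
```

In this idealized model the output devices run hotter at one-third power than at full power, and one-third power sits within about one percent of the worst-case point.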

This particular Fisher receiver—if you read all the fine print really closely—eventually said that it was 28 watts RMS per channel, although it’s not clear if a distortion level or frequency bandwidth was ever specified.

But considered all on its own, the Fisher was a fine unit. 25 or 30 clean watts per side would easily drive any normal speaker of the time to more-than-ample loudness levels in the typical living room. It was an attractive, convenient, easy-to-use piece. It ushered in the coming stereo market expansion and deservedly takes its place among the industry’s most memorable receivers.

McIntosh 1900

McIntosh. The “Macs.” The generally-acknowledged best electronics of their time (apologies to Marantz separates fans), long before the Aragons and Brystons and Audio Researches and Jeff Rowlands, et al. came on the scene.

McIntosh 1900 Receiver

They were primarily a separates company but when they did finally come out with their first receiver—the tube/solid state hybrid model 1500—it was a big event. They followed that with the more solid state/less tube (tuner section only) model 1700, but with the model 1900 in the early ’70s, “Mac” finally entered the solid-state receiver market.

And what a solid, well-built, beautiful, high-performance unit it was. You didn’t judge Macs on a watts- or features-per-dollar basis. These were the Cadillacs of their day and price was just not part of the equation. In 1972, a Ford Galaxie 500 would take you to the store to buy milk just as well as any luxury car, but that’s not the point, is it?

At a list price of around $950, it was probably twice as costly as similarly-spec’d receivers from mainstream companies, if not more, but the 1900 had an aura of solid quality that nothing else could match. Mac watts were somehow cleaner, more powerful, louder and more authoritative than those very pedestrian Kenwood watts coming forth from their KR-6160 receiver. In high school, as my interest in stereo picked up steam, one of my classmates had (or I guess his Dad had) a “high end” system consisting of a Mac 1900, AR-3a speakers and a Thorens turntable with a separately purchased tonearm (probably an SME). The system cost over $2,000, which made it an extremely expensive system in the early ’70s, real high-end. I remember he played the Isaac Hayes record “Shaft,” and the hi-hat strikes that began the title cut were so realistic and sharp, I didn’t think anything could ever sound better. I was blown away. I was a teen just getting into audio, and that impression has lasted to this day.

McIntosh electronics certainly had the cachet and their 1900 receiver did nothing to sully that reputation. It was quite arguably the first “high end” receiver of the modern equipment era, where the specs/price ratio was not as important as the build quality, company reputation, and perceived sonic superiority.

Pioneer SX-424 through SX-828 series

These were the receivers that launched the stereo college revolution of the ’70s. The line consisted of five models, from the SX-424 (15 wpc) to the SX-828 (50 wpc). They were beautifully-made, beautiful-looking units, with silver faceplates, wooden side panels, and heavily-weighted tuning flywheels that spun nicely from one end of the tuning dial to the other. Since younger aficionados are only acquainted with digital tuners, they’ve really missed out on one of the greatest tactile/high-quality equipment sensations of the halcyon era of stereo. (Another being the smoothly-damped, slow-opening cassette deck door—but that’s another story for another time.)

Pioneer was the standard-setter for mainstream receivers in that timeframe. Kenwood, Sansui, Sherwood and others definitely had some great equipment also—the budget-priced Sherwood S-7100A (20 wpc) being a particularly terrific value, a truly gutsy, great-sounding receiver. But the Pioneers were the benchmark units and their sales and marketing policies ensured they were the biggest sellers.

Vintage Pioneer Receiver

In the ’70s, all those millions of Advents, EPIs, JBLs and ARs blasting out Allman Brothers, the Who, Jimi and CSN&Y in beer-drenched, smoke-filled (never mind what kind!) dorm rooms across the country had to be powered by something. More often than not, they were made to sing by the clean, dependable, abuse-resistant power of a Pioneer receiver.

The SX-424 thru 828 model series was made from about 1971-1973. It was followed by the SX-434 series and then in 1976 by the SX-450 series models, the latter with their strikingly-gorgeous soft gold backlit tuning dial/power meter display area. Two of these later series units—one each from the 30 and 50 families—were so significant to the history and evolution of the high-fidelity industry that they’ll be called out on their own a bit later.

But for now, let’s remember the Pioneer SX-424 thru 828—the receivers that powered so much of the music of the Baby Boomers’ college-aged youth. From Joni Mitchell to Miles Davis to Santana—Pioneer was there.

Marantz 2230, 2245, 2270 series

No matter how good the accepted standard-bearer is in any field, there’s always something a cut above. Whatever it is, it has that something extra, a bit unexpected, a little better than it has to be. A better affordable family sedan than Toyota. A better chain restaurant than Olive Garden. The better ones exist because the company feels their customers will appreciate it and pay a little more for their product. Not too much more, but a little more and worth it.

Marantz 2270 Receiver

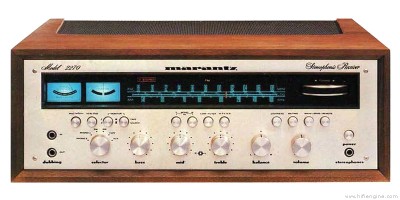

If the Pioneers (and by implication, the Kenwoods, Sansuis, Sherwoods et al.) were the benchmark for minimum-required excellence, then the Marantz 2200 series was the line that represented a cut above.

What distinctive and classy units they were. With their beautiful champagne-gold faceplates, their elegant black script control labelling and that striking deep blue tuning backlighting, the Marantzes were certainly lookers.

But their tuning knob—who could forget that tuning knob? While everyone else chased the conventional spin-the-dial target, some brilliantly-inspired industrial designer produced the horizontally-oriented thumb-actuated Marantz-only tuning knob. With its black-knurled slip-resistant surface and heavily-weighted expensive feel, the horizontal tuning knob ensured that the Marantz 2200-series receivers were instantly recognizable and forever unforgettable, even to this day.

You didn’t see too many of these in random dorm rooms and fraternity house bedrooms. While we have no specific empirical sales data from that period showing the demographic distribution of its buyers, the strong suspicion here is that Marantz was the receiver for “grown-ups,” and the others were mostly for college kids. Whenever one did see or come across a 2230 or 2245 in someone’s room, it elicited an involuntary—and well-deserved—chorus of “ooohs” and “ahhhs.” No one ever ooohed and ahhhed over a Kenwood KR-5200. But a Marantz 2245 or 2270? Well, that was different.

Now—another tale of the Marantz 2270, and not a particularly flattering one. I went to college in Boston and was a member of the nationally-known Boston Audio Society, a group of enthusiasts who met monthly to discuss audio matters and get presentations, factory tours and demonstrations of manufacturers’ latest gear.

At the time—the mid-1970s—the whole notion of “why did amplifiers sound different” was really taking hold in audio circles. At a BAS meeting in the fall of 1975, we got a presentation from a test equipment manufacturer (I forget who) who was going to show us—prove to us—why some amplifiers sounded better than others.

They used three popularly-priced receivers of about the same power output: a Pioneer, an AR receiver, and a Marantz 2270. All three were rated at about 50-70 watts RMS/channel into 8 ohms, within about 1.5 dB of each other. In other words, no real-life difference in loudness capability. I think the speakers for the demonstration were Advents or ARs.
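For anyone who wants to check the decibel claim, the conversion from a power ratio to decibels is 10 times the base-10 logarithm of the ratio. Here is a minimal sketch; the helper name is made up for illustration, and the 50 and 70 watt figures are simply the ends of the range quoted above.

```python
import math

def power_difference_db(p_high_watts: float, p_low_watts: float) -> float:
    """Difference between two power levels, expressed in decibels."""
    return 10 * math.log10(p_high_watts / p_low_watts)

diff_50_to_70 = power_difference_db(70, 50)
diff_doubling = power_difference_db(100, 50)

print(f"50 W -> 70 W : {diff_50_to_70:.2f} dB")
print(f"50 W -> 100 W: {diff_doubling:.2f} dB")
```

Going from 50 to 70 watts works out to roughly a dB and a half, in line with the figure quoted above. Even a full doubling of power is only about 3 dB, which is why the three receivers were effectively equals in loudness capability.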

When the receivers were pushed hard—to the edge of clipping, as we all watched on the scope—they sounded markedly different. The Marantz was clearly the worst.

These were the very early days of distortion spectrum analysis, and the presenter was showing us how an amplifier whose THD products consisted of upper-order harmonics would sound much harsher and more strident than an amplifier whose THD products were the more benign and musically-related lower-order (2nd- and 3rd-order) harmonics. In other words, it wasn’t just the “total” of THD; it was what that THD was made up of that really mattered.

We saw on the scope that the Pioneer had a reasonable spectral distribution of distortion products (a mix of lower- and upper-order distortion when pushed into clipping); the AR receiver was almost entirely lower-order distortion (2nd- and 3rd-order), so its clipping was quite smooth-sounding and hardly noticeable; but the 2270 was a complete mess when it ran out of steam, with virtually all 4th-, 5th- and 6th-order distortion. Nails on the blackboard. (See THD vs IMD distortion sidebar)
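The point that a single THD number hides the harmonic makeup can be illustrated with a small calculation. THD is the root-sum-square of the harmonic amplitudes relative to the fundamental, so two very different spectra can produce the identical figure. The amplitudes below are invented purely for illustration; they are not measurements of the Pioneer, AR or Marantz units, and the function names are hypothetical.

```python
import math

def thd_percent(harmonics: dict, fundamental: float = 1.0) -> float:
    """THD in percent: root-sum-square of harmonic amplitudes over the fundamental."""
    rss = math.sqrt(sum(amp ** 2 for amp in harmonics.values()))
    return 100 * rss / fundamental

def upper_order_share(harmonics: dict, first_upper_order: int = 4) -> float:
    """Fraction of the distortion energy at or above the given harmonic order."""
    total = sum(amp ** 2 for amp in harmonics.values())
    upper = sum(amp ** 2 for order, amp in harmonics.items()
                if order >= first_upper_order)
    return upper / total

# Hypothetical spectra: harmonic order -> amplitude relative to a fundamental of 1.0
smooth_clipper = {2: 0.012, 3: 0.009}             # all lower-order products
harsh_clipper = {4: 0.002, 5: 0.010, 6: 0.011}    # all upper-order products

for name, spectrum in [("smooth", smooth_clipper), ("harsh", harsh_clipper)]:
    print(f"{name}: THD = {thd_percent(spectrum):.2f}%, "
          f"upper-order share = {upper_order_share(spectrum):.0%}")
```

Both made-up spectra yield the same THD figure, yet one has none of its distortion energy in the upper orders and the other has all of it there. On a spec sheet they would look identical.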

This is not to denigrate the 2270, a well-built handsome powerhouse whose 70+ undistorted watts per side were more than enough to get a set of Large Advents far louder than any reasonably-sane college kid (or mature adult) could stand. It’s just an interesting recollection that must be included for the sake of historical accuracy and completeness.

Those High-Powered Pioneer Vintage Receivers!

Pioneer SX-1010

As the stereo market exploded in size among college-aged consumers in the ’60s and ’70s, receivers became the dominant electronic component, supplanting the separate preamp/power amp configuration that was most popular among the middle-aged audio enthusiasts who made up the majority of the market in the ’50s thru mid-’60s. Advances in electronic componentry, such as the widespread availability and low cost of reliable silicon transistors, made the design and manufacture of receivers feasible and popular. Combining three components (the power amplifier, preamplifier and tuner) on a single chassis, with a single main power supply and only one cabinet, made the manufacturer’s cost of production, shipping and warehousing plummet.

Vintage Pioneer SX-1010 Receiver

Retail pricing could thus be lowered dramatically, and all this technological advancement fortuitously coincided with the emergence of the biggest population group in the country’s history (the Baby Boomers, born between 1946 and 1964) and a sustained period of economic strength that lasted almost uninterrupted for decades, from the late ’40s right through the ’70s.

The Age of the Receiver was upon us. And a Golden Age it was. Every year major manufacturers like Pioneer, Kenwood, Sony, Sansui, JVC, Marantz and Sherwood introduced new and better models, with more features, more power, and lower prices.

Still, no one had cracked the “magic” power figure of 100 watts RMS per channel. The 3-figure barrier seemed like an impenetrable wall, almost foreboding and sinister, as if something horrible would befall the party with the temerity to attempt it. It was almost like the fear that aviators had in 1947 about breaking the sound barrier before Chuck Yeager did it in the Bell X-1.

There was the safety of 40, 50, 55, 70 watts per channel. But no one dared to go to…..<gulp>….100. Remember, too, in those days 60 per side was considered more than enough. And the reputable manufacturers in those FTC days were rating their equipment honestly and conservatively. It was a competitive badge of honor to see how far you could surpass your “published specs” in a review in a major magazine, then quote the reviewer in your next print ad saying, “The Kenwood XYZ easily surpassed its rated power specifications on our test bench, clocking an excellent……” 60 watts RMS was 60 really, really gutsy watts RMS.

Not like today, when most popularly-priced “100-watt x 7 channel” receivers sold at department stores are 100 watts at 1kHz with only one channel driven, not over the full 20–20kHz band with all (or even two) channels driven simultaneously (which really taxes the power supply, the output devices and the heat sinks), like they had to do in 1974. And too many of today’s receivers do it at a barely-hi-fidelity THD of 1% at 1kHz, not at the 1974 usual of .5% or .2% over the full 20–20kHz range.

If you read the owner’s manual of one of today’s popularly-priced “100 watt x 7” receivers very closely, you’ll likely find, in small print, a real rating of something like “60 watts RMS at .5% THD 20–20kHz, two channels driven simultaneously.” This is how they can still maintain FTC compliance.

Uh…..not exactly “100 watts x 7.” Hey, there’s no free lunch. Some lightweight 22-lb wonder selling for $299 is not going to miraculously deliver 100 watts RMS x 7 channels. The typical Kenwood or Pioneer 60-watt RMS x 2 integrated amplifier of 1978 weighed more than many so-called “100-watt x 7” midfi home theater receivers of today.

See: Trading Amplifier Quality for Features: A New Trend in AV Receivers?

Good example: The Yamaha RX-A1040 7.2 receiver. It sells for $1200. It’s a great receiver and does lots of great things. It even weighs 32 lbs, not 22. It says it’s “120 watts at 1kHz 2 channels driven at .9% THD,” a THD level that would be laughed out of the room at this price level in 1976. Later on, it does say it’ll do 110 watts 20–20kHz, 2 channels driven at .06% THD, at 8 ohms. A 4-ohm continuous/RMS rating? Nope, nowhere to be seen. They do give us a Dynamic Power rating at 4 ohms (shades of 1963!) but no 4-ohm RMS rating. I guess no speakers these days are ever 4 ohms anymore, are they? Wait, there are 4-ohm speakers? But Yamaha, one of the “good” manufacturers, never rates their amplifier full-bandwidth 20–20kHz RMS 2 channels driven into 4 ohms. Inexcusable.

IM (Intermodulation) distortion? In the 1970s, every manufacturer spec’d it, and it was usually as low as THD. Today, it’s never even mentioned, and we’d hazard a guess and say that a sizeable portion of today’s audio enthusiasts can’t even define it or explain why it’s arguably more audibly-important than THD. That said, modern amps don’t really run into a lot of the same issues that older amps did and these days if the THD+N measurements come out good, then it usually implies good IM behavior. Still, in this author’s opinion, it’s good practice to measure IM, like Emotiva does.

That’s why 60 “1974 watts RMS” seems so much gutsier than 100 “2015 watts” from a typical midfi AV receiver. And that’s why cracking the 100-watt RMS per channel barrier back then was such a big deal. Especially in a mainstream receiver.

So it was in this technological and market-competitive climate that the industry’s very first 100-a-side receiver was introduced: The unforgettable Pioneer SX-1010. Stylistically, it was the top of Pioneer’s “30” series—the SX-434 through 939—but the 1010 stood on its own, both in model nomenclature and in its place in audio history.

Pioneer SX-1250

Once the Pioneer SX-1010 broke the 100 wpc barrier, the floodgates opened. Every manufacturer raced to come out with their own 100 watt-per-side unit. Kenwood, Sansui, Sherwood, they all had them, and they were all great pieces of gear. (Well, not the Kenwood KR-9400, which could be used as an “improvised explosive device” it was so unreliable. But Kenwood replaced it with the excellent KR-9600 in short order.)

Soon, 100 watts per channel wasn’t enough. It went to 105, 110, 125. The “power race” was on.

See: AV Receiver Power Ratings Trends: How and Why Wattage Ratings are Manipulated

In 1976, Pioneer once again blew everyone into the weeds, by introducing their 50 series (SX-450, 550, etc.) topped off by the incredible SX-1250, rated at 160 watts RMS per channel, 20–20kHz, at .1% THD. Not .5% or .25% (McIntosh’s old claim-to-fame), but .1% THD over the full bandwidth.

Pioneer SX-1250 Receiver

The 1250 single-handedly opened a new chapter in receiver history: the era of the ultra-high-powered receiver. Soon after, 160 was hardly enough to get into the game. The SX-1250 was replaced by the SX-1280 in 1978 which boasted 185 watts per channel, not at .1% THD over the 20–20kHz bandwidth, but .03% THD. Rated, advertised, guaranteed—.03%!

Then in 1979, Pioneer came out with the totally insane SX-1980, which upped the ante to 270 watts RMS per channel, 20–20kHz, at .03% THD. It weighed 80 lbs. It was 20 inches deep. It wouldn’t fit in any normal entertainment furniture.

But the biggest, heaviest, most powerful two-channel receiver ever was the Technics SA-1000. 330 watts RMS per channel 20–20kHz. .03% THD. Bigger and heavier than the Pioneer SX-1980. I never actually saw one in person and can’t vouch 100% that it actually materialized in the flesh on dealer shelves. But they announced it.

Technics SA-1000 Receiver

And then, mercifully, it was over. The receiver arms race that Pioneer started finally came to a peaceful, anti-climactic end.

But man, was it fun while it lasted!

Quadraphonic Sound: The New Analog Frontier?

Kenwood KR-9940

Two-channel stereo took an interesting detour in the early 1970s into Quadraphonic Sound, or “Quad” as it came to be known. Somewhere along the line, some marketing people thought that the next step toward realistic music reproduction in the home was to have four channels instead of two, with two additional rear channel speakers, a four-channel amplifier and four-channel software.

Kenwood KR-9940 Receiver

We’ll spare everyone the excruciating details of how mangled and misdirected the marketing and product development efforts became. Suffice it to say, this was the first great incompatible format debacle in the consumer electronics industry, a preview of what was to come with Beta and VHS, PC and Mac, Dolby Digital and DTS, Plasma and LED, and on and on.

Some of these format wars have had conclusive winners and losers, like Beta and VHS, or more recently HD DVD and Blu-ray. In others, the competing formats have learned to play nice with each other and even establish some degree of compatibility, like PC and Mac computers, where they now share cross-compatibility of Microsoft Office programs and both systems can do e-mail, web surfing, etc. and communicate with each other no problem. In some instances, the less popular format has simply faded away without any real harm being done to the end user (plasma TV owners can receive and enjoy programs just as easily as owners of LED TVs can, even if plasma eventually fades off into the sunset).

But four-channel audio was a disaster right from the start, both technically and marketing-wise.

To begin with, there were three distinct encode-decode systems: two matrixed/phase-differential systems, one called SQ and the other called QS. There was also a “discrete” system called CD-4 that was LP-based, requiring a special phono cartridge with response up to 40kHz, to correctly track the “pilot signal” in the grooves of the CD-4 record and send that signal to the decoder in the electronics.

SQ would sort of decode QS records and vice-versa, but CD-4 was totally incompatible with the other two. All three systems would fabricate some kind of phony multi-channel sound when playing a conventional 2-channel LP or cassette tape.

None of the three systems was particularly great-sounding, but far and away the biggest impediment to marketplace acceptance was that the record companies didn’t have anything close to a clear idea as to which of the three systems, if any, they should back. Sound familiar (remember DVD-A and SACD)? With the major recording labels taking a “wait and see who the market winner will be before we commit to recording in that format” attitude, there was no significant amount of popular software available for sale in the record stores. With no clear four-channel software winner in the stores (everything remained two-channel stereo), the electronics manufacturers were reluctant to commit to putting just a single format into their products.

A few of the mainstream electronics companies tried to get out in front of the situation and position themselves as the four-channel leader, in the hope that if Quad did become big, they’d be known as the ‘go to’ brand.

Pioneer, Sansui (who actually was a partial developer of the QS system, so they certainly had an interest) and JVC (who was involved with the CD-4 system) all had extensive four-channel receiver offerings.

But the Kenwoods were the most memorable. They had two complete four-channel families, the 6340/7340/8340/9340 followed two years later by the 8840/9940 series.

The KR-9940 was one impressive technological tour de force, a cover-all-the-bases unit that incorporated every four-channel encode-decode system there was into one top-of-the-line piece. 50 watts RMS x four channels, it could be “strapped” to 125 x 2 channels for straight stereo use. With its huge display window and impressive backlighting appearance it was a striking design, a receiver that looked and felt expensive and cutting edge.

Since the advent of digital THX-approved home theater, there have been many “super receivers” from the likes of Onkyo/Integra, Denon, Marantz, Pioneer Elite, Sony ES and others that incorporate every home theater technology there is and their huge front panels are covered in logos, switches, lights and buttons.

But all of today’s feature-laden, large, imposing-looking super receivers had their way paved for them by the multi-format four-channel Quadraphonic receivers from 40 years ago, and the Kenwood KR-9940 exemplified that genre perfectly. Hats off.

Yamaha CR-1020 series

The late 1970s–mid 1980s might be considered by some to be the last hurrah, so to speak, of the original stereo craze that began with the advent of 2-channel in 1958. Social/demographic trends were changing rapidly: the majority of Baby Boomers had left college and were now full-fledged adults, getting married, raising families and buying houses. Walkmans, boomboxes, answering machines and VCRs were taking a significant chunk of the available consumer-electronics-buying dollars, whereas 10 years prior, stereo gear was competing pretty much only with television. Music-only stereo equipment would continue to weaken and struggle in the years to come until home theater came along in the late 1980s/early 1990s like a knight in shining armor and rescued the hi-fi industry. Without the boost that the popularity of home theater gave to electronics and speaker companies, many of them would have simply gone out of business.

Yamaha CR-1020 Receiver

Despite the gloomy times for two-channel music that lay just a few years ahead, the late 1970s–mid 1980s period was a great time for stereo components. Groundbreaking, paradigm-shifting designs and technologies peppered the consumer landscape. The CD came of age. Components shouted “Digital Ready!” on their shipping cartons. Two-channel “Hi-Fi” VCRs brought 20-20kHz movie soundtracks into the living room for the first time, foreshadowing the home theater craze just over the horizon. The Bose AM-5 heralded a new era in sub-sat speaker systems.

It was into this market environment that Yamaha introduced their family of elegant, beautifully-made, smooth-operating and great sounding “Natural Sound” receivers. Housed in beautiful teak or rosewood-colored wood cabinets, their distinctive, long thin rectangular control knobs would snug into the selected position with a satisfying, almost magnetic “thunk” as the knob was turned to the desired setting. Who knew simply going from “Phono” to “FM” could be so enjoyable?

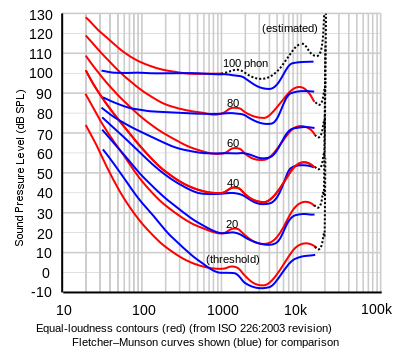

Another thing that Yamaha had going for them was their singularly intelligent Loudness control. For those of you too young to remember: The Loudness control was a setting on many receivers and integrated amplifiers of the time that attempted to compensate for the ear’s diminished sensitivity to bass at lower volume levels. Based on research by Harvey Fletcher and Wilden A. Munson in the 1930s, the “Fletcher-Munson curves,” as they’ve come to be known, are a set of “equal loudness” frequency response curves over the 20-20kHz audible range. In other words, they show how much the bass needs to be boosted at lower loudness levels to appear to have “equal loudness” to the midrange (around 1000Hz).

Fletcher-Munson Curves

Most manufacturers incorporated a Loudness switch on their equipment that introduced a fixed amount of LF boost, so that program material would sound fuller—more realistic—when played at low levels.

The problem was that these loudness controls were fixed: a constant 7-9dB or so of boost below around 80Hz. Whether you were playing your program material at 70dB SPL (sound pressure level) or 100dB SPL, your Loudness button was boosting the bass by the same amount. At anything above very soft playback levels, a conventional Loudness control made the bass sound boomy and exaggerated, which made it useless. It could also overdrive the woofers in your speakers, causing them to bottom out or even blow out.

Yamaha had a different and brilliant solution, a completely fresh, logical approach. It may have varied a little bit from product family to product family, but the basic Yamaha approach to loudness was this: The user would listen to some program material and advance the volume control to the loudest setting they were likely to ever use, then set the Loudness to “On.” At that point on the dial, the Loudness control’s effect would be zero, increasing in its effect as the volume was lowered. In other words, the louder the owner advanced the volume control, the less loudness compensation was applied. Hence instead of a dumb always-on-or-off loudness control that applied a fixed amount of bass boost regardless of volume setting, we now had a “smarter” variable loudness that went to zero compensation at the user-selected “full volume” position.

As implemented by Yamaha, the Loudness control worked beautifully, because it could be tailored to the specific listening habits of each individual user, in their room, with their speakers, according to their personal preference. “Natural Sound” indeed.
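To make the difference between the two approaches concrete, here is a toy model in Python. Everything numeric in it is an assumption for illustration: the 8 dB maximum is just the middle of the 7-9dB range mentioned above, and the linear taper over a 30 dB span is a guess at the general shape, not Yamaha’s actual circuit behavior. The function names are hypothetical.

```python
def fixed_loudness_boost_db(volume_db: float, boost_db: float = 8.0) -> float:
    """Conventional Loudness switch: same bass boost at every volume setting."""
    return boost_db

def variable_loudness_boost_db(volume_db: float, reference_db: float,
                               max_boost_db: float = 8.0,
                               span_db: float = 30.0) -> float:
    """Variable loudness: no boost at the user's chosen reference volume,
    growing (linearly, in this toy model) as the volume is turned down,
    and capped at max_boost_db."""
    drop = max(0.0, reference_db - volume_db)   # how far below the reference we are
    return min(max_boost_db, max_boost_db * drop / span_db)

reference = 100.0   # loudest level this listener ever uses, in dB SPL (assumed)
for level in (100.0, 85.0, 70.0):
    fixed = fixed_loudness_boost_db(level)
    variable = variable_loudness_boost_db(level, reference)
    print(f"{level:5.0f} dB SPL: fixed boost {fixed:.1f} dB, variable boost {variable:.1f} dB")
```

At the reference level the variable control adds nothing, while the fixed switch is still piling on its full boost. That is the boomy, woofer-punishing case described above.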

These were such nice receivers: well thought out, with extremely high standards of performance and workmanship. Great values in their time, they still command focused attention and high prices in the used market even to this day.

Dolby Surround Receivers: The Next Generation

Sony STR-AV1020 and Pioneer VSX-4400

Stereo had run its course and was on the wane, fading away fast. 1958-1988 was a good run: 30 straight years of growth for essentially the exact same industry, with the exact same retail delivery model. 1959 or 1971 or 1984, it made no difference. Whether the customer walked into an independent neighborhood shop, a regional chain like Pacific Stereo or Tweeter Etc., or a “hybrid” store like Lafayette Radio that carried a mix of house brands and national goods, it was all very similar: a large open central area with system displays, featured equipment piled up high with a “Special!” price tag on top of the stack, and a glass countertop display case by the cash register holding the small items like phono cartridges, record cleaners, maybe headphones, etc.

Off this open central area would be two or three “soundrooms.” How many would depend on the kind and size of store it was, but usually at least two—a low-to-mid-priced room, and a “high-end” room. These rooms were where the customer could do A-B comparisons of similarly-priced speakers and see the associated electronics and source units.

Sony STR-AV1020 Dolby Prologic Receiver

The whole process was pretty much the same for the manufacturer, the retailer and the customer for 30 straight years. The equipment changed, but the process stayed the same. Amazing, when you think about it.

However, stereo music listening was fading away as a major consumer leisure time activity and buying newer, fancier stereo equipment was becoming less and less important to the electronics-buying public. Video was the rage. Movie rentals, time-shifting recording of TV shows, camcorders—video was king all through the ‘80s.

Then the major electronics companies realized that VCRs, with their rapidly spinning helical-scan video record-play heads, had an effective “tape speed” of several hundred IPS (inches per second). This was necessary to be able to record the ultra-high frequencies of video signals. But it suddenly dawned on some clever product person that if they moved the audio heads from their fixed position inside the VCR’s chassis to the spinning position next to the video heads, then the audio heads, because of the ultra-fast tape speed, could now record 20Hz-20kHz audio signals with ease. Voilà! The Hi-Fi VCR was born and videotapes with full-range audio made their first appearance. Then Dolby began to encode some rudimentary surround information on commercial VHS movies, and when the audio companies responded with their first simplified Dolby decoding circuitry in their receivers, Home Theater became a reality.

As the 1980s drew to a close, the Home Theater revolution was on. Finally, the long-awaited marriage of audio and video was taking place.

Those early Home Theater receivers ushered in a new age of consumer usage and buying habits, and Home Theater involved the entire family in a way that male-only “audiophiliosis” never had. Add to that technological development the explosion in the housing market all through the 1990s and the stage was set for Home Theater to make its mark on the consumer electronics landscape.

The very first Home Theater receivers utilized matrixed Dolby decoding circuitry that derived two channels of rear surround information via phase-differential synthesis from the two front stereo channels. This soon evolved into the Dolby Pro Logic (DPL) system, a “five-channel” (actually it was still two, with the center derived from the front left/right, but don’t tell anyone, ok?) encode-decode home theater format which utilized five speakers—a center-channel speaker placed on the TV, the left and right main front speakers, and two mono surround speakers (band-limited to 7kHz on the high end).

Dolby Pro Logic became the breakthrough format, the one that really put Home Theater on the map. Early DPL receivers around 1990 from Sony and Pioneer (such as their STR-AV1020 and VSX-4400, respectively) sold in droves and droves. Those two units deserve their spot on this list because of the pivotal role they played in popularizing the new Home Theater movement.

They were embarrassingly awful units in every possible respect:

- Cheesy, featherweight, plasticky construction.

- Busy, unattractive faceplates with far too many ill-fitting and lousy-feeling buttons and switches (long gone were the days of those wonderful-feeling weighted tuning dials of the Pioneer SX-950 and the great-feeling controls of Yamaha’s Natural Sound units).

- Tuners with FM specs and performance inferior in sensitivity, selectivity, and capture ratio to even the most pedestrian mid-1960s tuners.

- Unbelievably (I really mean “unbelievably”) low distortion specs—the main channels on the VSX-4400 were rated at .008% THD+N. It makes you wonder how much feedback they employed to yield this number.

And those amplifiers! Cheap, harsh, brittle-sounding amps (the Pioneer’s surround channels were just inexpensive “chip” amps), with no real hi-fidelity pretensions whatsoever. As you cranked the receiver up with the surround channels on, you could see the front panel display dim as the power supply ran out of juice. Wattage and distortion ratings were pulled pretty much out of thin air: “100 watts” over a completely unspecified bandwidth (they said “100 watts, 8 ohm.” What does that mean?), with no indication as to how many channels were driven simultaneously, at a level of distortion not tied to the frequency range or, worse, specified at 1kHz only. And they sounded just like they were rated: Terrible.

This was equipment not worthy of their company’s distinguished name. But they sold in huge numbers, as did similarly-awful equipment from Kenwood, JVC and many others.

However, one good thing did happen—Home Theater was here to stay, and even a Pioneer VSX-4400 powering a Bose AM-7 speaker system sounded far better than the little 2 x 3” speakers built into that Panasonic 32” CRT TV.

Yet as embarrassingly bad as these early home theater receivers were from a looks, feel and actual audiophile performance standpoint, the mainstream electronics manufacturers got their respective acts together in astonishingly short order, and phenomenally capable, full-featured, high-build-quality units quickly became the order of the day.

Discrete Surround Sound: The Digital Era Takeover

Onkyo (and their higher-end offshoot, Integra), Marantz, Harman Kardon, Yamaha and many others were soon offering home theater receivers with a features/performance-to-price ratio the likes of which had never been seen in the consumer electronics industry.

The Marantz SR7400 from 2003 was typical of these units: For a retail price of only $739, the customer got a full-featured 6.1-channel receiver, with Dolby Digital, Dolby Pro Logic II, DTS, and pretty much every other then-available surround decoding format.

Marantz SR 7400 Dolby Digital/DTS AV Receiver

The amplifier section, although not adhering to 1974 FTC ratings standards, was rated at 105 watts RMS per channel. It was a fully discrete design (not a chip amp) that could handle reasonably low impedance speakers and it didn’t have the brittle, harsh sound character of the Sony STR-AV1020 or the Pioneer VSX-4400. Onkyo actually advertised their receivers’ low-impedance drive capability with the amusingly naïve acronym WRAT, which stood for Wide Range Amplifier Technology. (Obviously, no one in Onkyo’s home office in Japan recognized that “WRAT” might not be the best-sounding name they could use in an English-speaking country!)

The FM tuner sections in these receivers were admittedly pretty mediocre, but that simply reflects the market reality that in the last 10-15 years, “serious” listening to FM broadcasts has all but disappeared. There is no longer any need for manufacturers to spend the money on 1980s-era FM performance.

These modern-day popularly-priced home theater receivers are a high water mark in the history and evolution of our business and the heft, features and performance packed into these units for the price is nothing short of amazing. They should be fully recognized and appreciated for the noteworthy achievements that they are.

From that point on, things got really interesting, as the Age of the Super Home Theater Receiver was now upon us.

Denon 5800 THX AV Receiver

MSRP: $3800 in 2001

This was a truly fine unit, the first of many super Home Theater receivers to come, from Denon, Pioneer Elite, Sony ES, Marantz, Onkyo and Integra and many others. All of these units boasted an incredibly comprehensive feature set compatible with every then-current format, amazing build quality and excellent decoding performance.

The Denon 5800 (and the others) had outstanding audio performance as well, but, interestingly, they were not even close to spec’ing and adhering to 1974 FTC amplifier performance standards. The problem here was that 1974 FTC power rating standards applied to 2-channel “open” amplifiers only (not part of a system), and those standards were never updated to cover multi-channel amps, Home Theater receivers or “closed” systems like powered subwoofers, computer speakers or powered monitors.

The smart thing for the FTC or some other administrative body to have done would have been to call for the manufacturers of multi-channel amplifiers to rate their equipment with at least two channels driven simultaneously from 20–20kHz or perhaps with two channels and a 3rd “associated” channel (like the center) to all be driven to rated power, 20–20kHz. A rationale could have been made that receivers’ internal amplifier channels only had to be rated down to 40 or 50Hz, since the external powered subwoofer would cover below that frequency point and the receiver’s amplifiers would never actually be tasked with reproducing bass below 40 or 50Hz—maybe even nothing below 80Hz.

But no such standards were forthcoming, so even manufacturers of these “super receivers” took to inventing ratings and distortion claims, just like in the 1963 Fisher unit or in those horrendous 1989 Sony and Pioneer units.

At no point does the Denon 5800 ever rate its amplifier section from 20–20kHz with all 7 channels driven simultaneously. Its advertised specification of “170 watts x 7” is not met to 1974 FTC standards and no explanation or apologies are given. This is not to fault the Denon—it’s because the FTC does NOT demand all channels be driven simultaneously over the full bandwidth for the power rating for more than two channels. Remember their mandate was for two-channel stereo receivers, not multi-channel AV receivers.

This is the proof that today’s 170 watts x 7 is often not like 170 watts x 2 in 1976. However, this is not to denigrate the Denon 5800-series of receivers, which are fabulous receivers and can outperform their stereo-era predecessors in terms of low noise and low distortion amplifier behavior. But unfortunately, many modern multi-channel receivers cannot outperform their stereo-era predecessors in amplifier performance. That many modern receivers have amplifiers that are inferior to their mid-‘70’s counterparts is a biting comment on how our industry’s required amplifier measurement standards and performance expectations have slipped.

IM (intermodulation) distortion? As stated earlier in the Pioneer SX-1010 section, there is nary a mention of it by Denon. Some people will give you a long, well-reasoned, strongly-defended list of reasons why IM measurements are not really needed any longer, and there may well be other measurements that are more revealing and relevant in modern amplifiers. Nonetheless, there is no indication that Denon’s marketing/product management people have any awareness or understanding of it. The engineering is top-notch: the Denon 5800 is undoubtedly a good performer with respect to IM distortion, since the rest of the unit is so well-designed and well-behaved. But IM, audibly more important than THD, is not even acknowledged to exist by its marketing and product management people. (See distortion sidebar.)

Here are Sound & Vision’s findings from their June 2001 review of the Denon 5800:

Output at clipping (1 kHz into 8 ohms)

one channel driven: 187 watts (22.5 dBW)

five channels driven: 138 watts (21.5 dBW)

Distortion at 1 watt (THD+N, 1 kHz, 8 ohms): 0.037%

Note: Five channels driven. (It’s a seven-channel unit.)
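A quick aside on the dBW figures in that table: dBW is simply power expressed in decibels relative to 1 watt. The short sketch below shows the conversion in both directions; the function names are ours, chosen for illustration. Running it on the wattages above gives values within a few tenths of a dB of the magazine's published figures, which may have been rounded.

```python
import math


def watts_to_dbw(watts):
    """Convert power in watts to dBW (decibels relative to 1 watt)."""
    if watts <= 0:
        raise ValueError("power must be positive")
    return 10.0 * math.log10(watts)


def dbw_to_watts(dbw):
    """Convert dBW back to watts."""
    return 10.0 ** (dbw / 10.0)


# Wattages taken from the measurements quoted above.
for label, watts in (("one channel driven", 187.0), ("five channels driven", 138.0)):
    print(f"{label}: {watts:.0f} W = {watts_to_dbw(watts):.2f} dBW")

# The gap between the two conditions, expressed in dB.
print(f"difference: {watts_to_dbw(187.0) - watts_to_dbw(138.0):.2f} dB")
```

Seen in decibels, the drop from one channel driven to five is a little over 1 dB, which helps put the raw wattage difference in perspective.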

THD+N @ 1kHz, but no IM. Where are the full bandwidth power and distortion measurements?

This is not to detract from the overall excellence, superb build quality and amazing overall capability and flexibility of this unit and others of its ilk. In operational respects, it’s even better—by a wide margin—than its predecessors from the stereo era, because the 5800 has to do so much more and anticipate so many more user options, all the while keeping its operation intuitive and straightforward.

If the 5800 had simply been rated at say, 150 watts RMS per channel, any two channels driven simultaneously, from 20–20kHz at not more than .1% THD (which it undoubtedly could do, quite easily), then there wouldn’t be any question or quibble.

But Denon (and all its similar cousins in the marketplace) gets two demerits for claiming “170 watts per channel x 7,” and then not being able to deliver. Shame on them, shame on the FTC for dropping the ball, and most of all, shame on the print magazines and shame on this generation of aficionados for either not knowing the difference, or worse, not caring. Audioholics.com appears to be a lone wolf measuring full power bandwidth and distortion these days.

See: Audioholics Amplifier Measurement Standard

Editorial Note about FTC Amplifier Testing

The FTC also issued guidance concerning the testing requirements for measuring the power rating of multi-channel amplifiers, noting that at a minimum the left front and right front channels of multi-channel amplifiers are associated under the rule. It would therefore be a violation of the rule to make power output claims for multi-channel amplifiers utilized in home entertainment products unless those representations are substantiated by measurements made with, at a minimum, the left front and right front channels driven to full rated power. Members of the FTC did try to mandate some sort of All Channels Driven specification, but it never materialized. It is unclear whether the FTC actually enforces this ruling, which is why it's always a value add when third-party review organizations like Audioholics verify amplifier manufacturers' power claims.

See: FTC Amplifier Standard for Multi-Channel Amplifiers for more information.

When Parasound or McIntosh rate their 3- and 5-channel Home Theater power amplifiers correctly with all channels driven 20-20kHz, it’s voluntary, not because the FTC is telling them to do so. They know their customers are knowledgeable and expect the proper ratings, so they do it right.

Many well-respected people will say that the All Channels Driven issue is not relevant in Home Theater, because there is never a situation when the program material taxes all 5 or 7 channels to deliver maximum power simultaneously.

See: The All Channels Driven Amplifier Test

That is indisputably true. But it is this author’s opinion that citing power with 2-channels driven simultaneously over the full 20-20kHz bandwidth at a stated level of both THD and IM distortion should be the minimum requirement for any true high fidelity amplifier. That’s my position. Others are free to disagree.

Honorable Mention

Yamaha DSP-A3090 Integrated Amplifier

MSRP: $2,000 in 1999

Like the 10 Most Influential Speakers article, this is an unapologetically American-centric article. For most of the 20th century, stereo receivers were almost completely an American market phenomenon. The rest of the hi-fi world seemed to like separates or at least integrated amplifiers better than all-in-one receivers.

Integrated amps are an interesting category of product. With two of the three main sections (the pre-amp and power amp) sharing a common chassis and power supply, integrated amps strike a nice economical and space-saving balance between the no-compromise performance of three totally separate units and an all-combined-in-one AM/FM receiver.

In my youth, FM radio was hardly a consideration to us; what we wanted to do was play records. So my friends and I all put together our systems based around integrated amplifiers: Kenwoods, Dynacos, Pioneers, Sansuis, etc. Some of us added the matching tuners at some point later on, but many of us never did.

Yamaha DSP-A3090 Dolby Digital Integrated Amplifier

A manufacturer has a bit more marketing flexibility and pricing/cost leeway in an integrated amp than in a receiver. Receivers were more closely tied into “magic” price points ($299, $399, $599, etc.), whereas integrateds could be priced at more or less whatever their power rating and feature set seemed to justify.

So it is with our can’t-ignore-it Yamaha DSP-A3090 integrated home theater amplifier. Yamaha decided to use the integrated amplifier physical format to build an incredibly comprehensive, forward-thinking, user-friendly home theater centerpiece that combined every cutting edge decoding format available at the time along with some extremely thoughtful and innovative touches of their own.

The DSP-A3090 featured bona fide Dolby Digital 5.1 decoding (the first unit to have DD decoding built in), as well as Dolby Pro Logic. Its five channels of amplification were rated at 80 watts RMS/Channel, all 20-20kHz at <0.015% THD. Very impressive. Its power supply was as large as those in some of today’s 7 or 9 channel AV receivers. It could do a full 80wpc x 5 with all channels driven.

It offered two additional front channels, called “effects” channels, at 25 watts per channel. This was clearly way ahead of its time, way before ‘height’ or Atmos or anything else that has come along in the last 15 years or so. Yamaha led the way with DSP and eventually proposed front/back height channels before anyone else. Their 11.1-channel speaker layout proposal for the RX-Z11 came back in 2007, before discrete 3D immersive surround formats were even thought of.

See: 3D Immersive Surround Formats and Loudspeaker Layouts

There was a built-in parametric equalizer, which allowed a knowledgeable user to make fine, subtle tonal adjustments to correct for room acoustics issues or program shortcomings.

And there was an entire roster of additional DSP digitally-derived acoustic environments like Pavilion and Jazz Club and Pop/Rock. Unlike many of the DSPs on other brands’ units that had been tried before or would come later, Yamaha’s sounded good. Really good. I remember being over at my older cousin’s house listening to a Buddy Rich big band jazz concert through his DSP-A3090 and DBX Soundfield main speakers and Velodyne sub. (I think all his other speakers were small ARs, but I forget.) He’d applied some digital effects to the playback (I think it was “Concert Hall”) and man, did it sound good! Switching off the effects flattened out the sound and made things sound boringly two-dimensional.

I have rarely been so impressed with a piece of electronics as I was with the Yamaha DSP-A3090. Gobs of super-clean power. Real digital decoding. Extra front effects channels that added realism, not gimmickry. Intelligently-done EQ. Acoustic space settings that actually sounded good and added to the listening experience.

Beautiful appearance, superb feel and heft, combined with silky controls.

$2,000? A bargain. A steal, even.

If it’d had a tuner built in, it would be the best receiver of all time. But receiver or not, I have to mention this guy.

Conclusion

So there you have it: a highlighted view of some of the most memorable and important receivers of the last half-century or so. As America lived through the Kennedy administration, the Vietnam War, Woodstock, the Bicentennial, the dawning of the digital age, the end of the Cold War, Y2K, and the rise of the Internet and Social Media, one thing remained constant: our love of music and movies. These are the receivers that brought that to us.

What do the next 50 years hold? Will we even recognize the form factor of the home entertainment system in 2065? After all, both 1963’s Fisher 500-T and 2015’s Denon AVR-X7200W are still undeniably receivers—the same basic size and shape, with control knobs and speaker terminals and AC cords.

Will Bluetooth and other wireless/streaming technologies to come render the hard-wired receiver irrelevant? Will “soundbar”-type units that cater to the ever-increasing craving for convenience and the desire to minimize domestic living space clutter replace the receiver (and its associated separate box or in-wall/in-ceiling speakers) altogether?

It’ll be interesting, that’s for sure. If I’m so lucky as to be here in 2065, I’ll write another article. Stay tuned.

Reminder: Please be sure to check out our THD and IMD Distortion Sidebar on the next page if you haven't already.

Please also participate in the related discussion on our forum about this article and let us know your most memorable receiver experience.

THD and IMD Distortion—Sidebar

The term “distortion” is used pretty frequently in audio, but it’s not understood particularly well. There are different kinds of distortion, and some kinds are more audibly bothersome than others.

By pure definition, if the output of an audio device differs in any way from the input, that difference is “distortion,” because theoretically, the device’s output should be a perfect mirror of the input signal. Nothing more, nothing less.

Well, unfortunately, this is the real world, and except for former Heavyweight Champion Rocky Marciano’s 49-0 record, nothing is perfect. Audio equipment produces distortion, and this distortion falls into two main categories: harmonic distortion and intermodulation distortion.

We’ll explain both kinds (in simple terms, don’t worry!), and then you’ll know more than your friends.

Harmonic Distortion

This distortion is defined as unintended signal products generated by an audio product that are whole number multiples of the original signal. For example, if an audio device is asked to reproduce a 40Hz signal, and instead produces 40Hz and a small amount of 80Hz + 120Hz + 160Hz + 200Hz, the 80Hz + 120Hz + 160Hz + 200Hz product is called harmonic distortion. Small amounts of distortion close in multiples to the original signal (“lower-order harmonics,” like 80Hz) are barely audible; larger amounts of distortion in greater multiples far away from the original signal (“upper-order harmonics,” like 200Hz) are grossly objectionable to the human ear.

The sum total of all harmonic distortion products is expressed as a percentage of the original signal, or % Total Harmonic Distortion (% THD).
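To make the arithmetic concrete, here is a minimal sketch of how a %THD figure can be computed from measured harmonic levels. It assumes the common convention of combining the harmonic amplitudes as a root-sum-of-squares before dividing by the fundamental; other conventions exist. The function names and the example voltages are ours, purely for illustration.

```python
import math


def harmonic_frequencies(fundamental_hz, count):
    """Return the first `count` harmonics above the fundamental (2x, 3x, ...)."""
    return [fundamental_hz * n for n in range(2, count + 2)]


def thd_percent(fundamental_level, harmonic_levels):
    """Total harmonic distortion as a percentage of the fundamental.

    Levels are amplitudes (e.g. volts RMS). Harmonics are combined as a
    root-sum-of-squares, which is an assumed convention, not the only one.
    """
    if fundamental_level <= 0:
        raise ValueError("fundamental level must be positive")
    distortion = math.sqrt(sum(level ** 2 for level in harmonic_levels))
    return 100.0 * distortion / fundamental_level


# The 40Hz example from the text: harmonics at 80, 120, 160 and 200Hz.
print(harmonic_frequencies(40, 4))

# Hypothetical measurement: 1.0 V fundamental with two small harmonics.
print(f"{thd_percent(1.0, [0.003, 0.004]):.2f}% THD")
```

In the hypothetical measurement, harmonics of 3 and 4 millivolts against a 1 volt fundamental work out to 0.5% THD.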

The human ear is most sensitive to midrange frequencies, so small amounts of distortion (less than 1%) in the 500-2000Hz range are clearly audible.

We’re pretty insensitive to harmonic distortion in the bass range, so even 5–10% THD in the 20–60Hz region is usually not objectionable, as long as the device (such as a subwoofer) is not exhibiting other forms of audible misbehavior as well (mechanical scraping, buzzing, port ‘chuffing’, etc.).

(See figure 1.)

Illustrations courtesy of the Joshua Cooper Company

Intermodulation Distortion

This is a bad one, because—unlike THD—it’s not harmonically-related to the original signal at all, so even very small amounts are audibly objectionable. IM distortion is when the distortion products occur at frequencies that are sums and differences of the original input signal. For example, if the input is 500 Hz and 2200 Hz, then the IM distortion products will occur at 1700 Hz and 2700 Hz. These frequencies have no musical relationship to the original frequencies at all, so the sound is very harsh and discordant.
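The sum-and-difference arithmetic is easy to check for yourself. The sketch below computes only the simplest (second-order) products described above and then confirms that neither one is a whole-number multiple of either input tone. The function names are ours, and real amplifiers can also generate higher-order products that this sketch ignores.

```python
def second_order_im_products(f1_hz, f2_hz):
    """Return the difference and sum frequencies for two input tones."""
    return abs(f2_hz - f1_hz), f1_hz + f2_hz


def is_harmonic_of(freq_hz, fundamental_hz):
    """True if freq_hz is a whole-number multiple of fundamental_hz."""
    return freq_hz % fundamental_hz == 0


inputs = (500, 2200)
products = second_order_im_products(*inputs)
print("IM products:", products)

# Neither product lines up with a harmonic of either input tone.
for product in products:
    related = any(is_harmonic_of(product, tone) for tone in inputs)
    print(f"{product} Hz harmonically related to an input: {related}")
```

That lack of any harmonic relationship is exactly why these products sound so discordant.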

(See figure 2.)

Now when you read those THD and IM distortion specifications, you’ll know what they mean.

A Note about THD + N vs IMD Distortion Testing

By Gene DellaSala

Back in the early days, amplifier power over a wide bandwidth was lacking by today’s standards. Output devices switched much more slowly and thus were subject to slew-induced distortion (high distortion at high power due to decreased product bandwidth). As a result, IMD testing was often the best way to measure and characterize this behavior, since it was a more revealing way of testing bandwidth-limited devices at their upper frequency limits. THD+N tests would be unrevealing in these cases since the generated harmonics would exceed the bandwidth of the Device Under Test (DUT). A good example would be a DAC whose sampling frequency is 8kHz: you'd want to test how accurate it was right up to its usable bandwidth, which in this case would be 4kHz (the Nyquist frequency, half the sampling rate) and clearly within the range of human hearing (up to 20kHz). When testing highly bandwidth-limited systems containing A/D and/or D/A converters, IMD testing (using DFD or twin-tone) is the ONLY way to measure non-linearity above about 50% of the system bandwidth. THD+N measurements above 50% of the system bandwidth will simply reveal nothing about non-linearity because the harmonics will fall outside of the system bandwidth.
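The 4kHz example can be worked through numerically. The sketch below lists which harmonics of a test tone land inside a given system bandwidth, and which low-order twin-tone products do. The function names, the choice of test tones (3000Hz and 3400Hz) and the decision to look only at second- and third-order products are our own assumptions for illustration.

```python
def in_band_harmonics(fundamental_hz, bandwidth_hz, max_order=5):
    """Harmonics (2nd through max_order) that fall within the bandwidth."""
    return [n * fundamental_hz
            for n in range(2, max_order + 1)
            if n * fundamental_hz <= bandwidth_hz]


def in_band_twin_tone_products(f1_hz, f2_hz, bandwidth_hz):
    """Second- and third-order twin-tone products within the bandwidth."""
    candidates = {
        abs(f2_hz - f1_hz),      # second-order difference
        f1_hz + f2_hz,           # second-order sum
        abs(2 * f1_hz - f2_hz),  # third-order products
        abs(2 * f2_hz - f1_hz),
    }
    return sorted(p for p in candidates if 0 < p <= bandwidth_hz)


BANDWIDTH_HZ = 4000  # usable bandwidth of the 8kHz-sampled system in the text

print("harmonics of 1000 Hz in band:", in_band_harmonics(1000, BANDWIDTH_HZ))
print("harmonics of 3000 Hz in band:", in_band_harmonics(3000, BANDWIDTH_HZ))
print("twin-tone products in band:",
      in_band_twin_tone_products(3000, 3400, BANDWIDTH_HZ))
```

A 3000Hz tone has no harmonics left inside the 4kHz band for a THD+N test to catch, while the twin-tone pair still produces in-band products that can be measured.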

Today we have much better (i.e., wider bandwidth, faster) output transistors, which allow audio amplifiers to have extremely wide bandwidth (>100kHz), octaves above the limits of human hearing. Thus, THD+N testing can typically give us all of the relevant data we need if done properly. This involves testing the amplifier full bandwidth (20Hz to 20kHz) at low and high power to determine consistency of performance. If bandwidth is preserved and the distortion remains low (< 1% at rated power), then this is usually a good indication that the amplifier will also perform similarly well under IMD testing. It’s also very useful to check amplifier distortion using an FFT analysis (1kHz fundamental), observing the spectral content of the harmonics generated and any ill behavior caused by ground loops or unwanted out-of-band noise.