Comb Filtering, Acoustical Interference, & Power Response in Loudspeakers

Comb Filtering and Acoustical Interference are two audio terms that relate to the manner in which two or more sound sources (such as two speakers or two drivers within a speaker system) interact and affect each other. The importance and audibility of these phenomena are the subject of this article, and they are a source of a continuing difference of opinion among well-respected equipment designers and acoustic theorists/researchers.

Before we debate the issues surrounding Comb Filtering and Acoustical Interference, it’s prudent to first clearly define these two terms, since more often than not people lump both phenomena under the term comb filtering. Although the two are similar, it’s more accurate to separate them based on the listening conditions we are setting out to describe, as follows:

Comb Filtering: This occurs when a delayed version of a signal combines with the primary signal, as happens when two or more loudspeakers play the same signal at different distances from the listener. In any enclosed space such as a music or theater room, listeners hear a mixture of direct and reflected sound. Because the reflected sound takes longer to reach our ears, it constitutes a delayed version of the direct sound, and a comb filter is created where the two combine at the listener. The extent of its audibility depends on how lively the room is, since plentiful reflections tend to average out the overall response. Note that this interference may be constructive (additive) or destructive (subtractive).

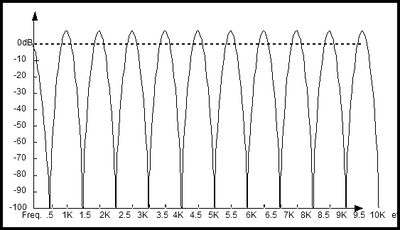

Acoustical Interference: This phenomenon occurs when a single sound source such as a loudspeaker shares the same bandwidth across multiple drivers within the cabinet separated by a physical distance greater than the wavelength of propagation. If the interference is destructive at some given frequency, it will also be destructive at odd multiples of this frequency. This gives rise to a graph such as shown below, which takes on the appearance of a comb. The extent of audibility depends on the position of the listener relative to the speaker and the physical distance between the offending drivers relative to the wavelength of the lowest frequency they commonly produce.

In order to generate the high degree of cancellation shown, the sound sources must generally be within the same enclosure, for reasons to be explained later. This is why the term “acoustical interference,” which is really a subset of comb filtering, is generally applied to the behavior of a particular speaker, whereas “comb filtering” is applied to the behavior of a set of speakers.

Acoustical Interference / Comb Filter Measurement

Comb filtering, which gets its name from the comb-like appearance of the above graph, is also known as “picket fencing.” This should not be confused with radio wave multipath, in which reception comes and goes in response to signal variations as the receiver (your car radio) travels. The acoustic combine-cancel-combine-cancel phenomenon is called “picket fencing” because, as the listener moves across the soundstage, it sounds like the “rat-tat-tat-tat” you hear when driving past a picket fence in a car with the window open.

Acoustical Interference pertains more to where a common signal in the same frequency range is reproduced by two physically separate sources, such as two drivers operating in the same frequency range, or the frequency overlap of two drivers in the crossover region. If the drivers are separated by a distance greater than ¼ (90 degrees) wavelength of the highest frequency being reproduced, then the time/distance issues that manifest themselves at those frequencies mean that at some angles relative to directly on-axis, their outputs combine in phase, and at some angles, their outputs cancel out of phase.

Should You Run Two Pairs of Loudspeakers? - YouTube Discussion

Audibility of Comb Filtering & Acoustical Interference

There seem to be two schools of thought on the topic of comb filtering. Some engineers use this term interchangeably with acoustical interference, implying that the audible effect of comb filtering between a pair of speakers playing in a room is similar to that of multiple high frequency drivers in the same cabinet spaced farther apart than their common wavelengths of operation.

School #1: It’s Audible

Some believe comb filtering is harmful and avoid designing multi-driver loudspeaker systems in which drivers share the same bandwidth of operation. Their designs feature a single woofer for bass, a single midrange for vocals, and a single tweeter for the highs. Their argument is that having one driver over a specified bandwidth is better than having multiple drivers performing that task. There is nothing wrong with this approach, but if more output and higher sensitivity are required, there is absolutely no reason why multiple woofers cannot be employed in the design. Not only will comb filtering not be an issue at these lowest frequencies, but the overall system distortion will be reduced, because for every doubling of identical bass drivers, each driver will only have to work half as hard for a given output level.

Editorial Note on soundwave behavior:

λ = C/F (the wavelength of the sound is equal to the speed of sound divided by the frequency of the sound). All pure tones have a 360 degree cycle, which can be represented by the height of an arrow above the horizontal axis as the arrow rotates in a complete circle at a constant speed proportional to the frequency. Bigger arrow = bigger amplitude, and faster rotation = higher frequency. If the arrow starts ON the horizontal axis and spins one complete circle, that is 360 degrees of rotation. That is a very simple explanation of phase (good enough for us right now).

School #2: It’s NOT Audible

On the flip side there is an alternative viewpoint that comb filtering is “inaudible” and nothing more than a measurement artifact. Saying comb filtering is inaudible is a bit like saying cars can go fast. It doesn't tell us much. To qualify that statement a bit more, it is possible that with a complex signal like stereo music, in a normal room with a good amount of reflective energy, those dips that occur from comb filtering are impacted by the mathematically inevitable fact that at some frequencies in some locations one speaker will be pushing the air (in phase), while simultaneously the other is pulling the air (out of phase). You might not perceive the dips as abnormal or undesirable. The problem with this theory of course, is that in a real room it is impossible to separate the two. If we put two speakers at some distance apart and play stereo, what we hear will no doubt include the effects of comb filtering from the room.

This will not be, or sound, as bad as, for example, the out-of-phase behavior that occurs when one uses two tweeters in a system, separated by a distance that is significant relative to the wavelengths they radiate. (Wavelengths decrease with increasing frequency: high frequencies have short wavelengths, and low frequencies have very long ones.) For this reason it is quite possible to mount two 12” subwoofers side by side, or even a few feet apart, and have them act as one speaker. At 2000 Hz, where most two-way woofers and three-way midranges are playing, the wavelength is only 6.78 inches, meaning 180 degrees is half of that, or 3.39 inches. As the frequency increases, the wavelengths become proportionally smaller. (The wavelength at 4000 Hz is half that at 2000 Hz.) The formula for calculating the wavelength in inches is 13560/frequency in Hz, so 13560/2000 = 6.78 inches for a 2000 Hz tone.

For example, if we are 3.39 inches farther from the left speaker than the right speaker, we should expect a cancellation in the sound at 2000 Hz (180 degrees out of phase). At 6000 Hz we are 540 degrees out of phase (180 plus one full cycle), so we can expect another dip in the response. At 10,000 Hz we are 900 degrees out of phase (180 plus two full cycles), which produces yet another dip.
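The arithmetic in this example can be sketched in a few lines of Python. This is only an illustration under the article's own figure of 13560 inches per second for the speed of sound; the function names are hypothetical, and the sketch assumes two equal-level sources with no room reflections.

```python
# Speed of sound in inches per second, the figure used in the text.
SPEED_OF_SOUND_IN_PER_S = 13560.0


def wavelength_inches(frequency_hz):
    """Wavelength in inches: speed of sound divided by frequency."""
    return SPEED_OF_SOUND_IN_PER_S / frequency_hz


def null_frequencies(path_difference_in, max_frequency_hz=20000.0):
    """Frequencies at which the path difference equals an odd number of
    half wavelengths (180, 540, 900... degrees), i.e. where two
    equal-level sources would cancel."""
    nulls = []
    k = 0
    while True:
        f = (2 * k + 1) * SPEED_OF_SOUND_IN_PER_S / (2.0 * path_difference_in)
        if f > max_frequency_hz:
            break
        nulls.append(f)
        k += 1
    return nulls


if __name__ == "__main__":
    print(wavelength_inches(2000.0))        # about 6.78 inches
    print(null_frequencies(3.39, 12000.0))  # about 2000, 6000 and 10000 Hz
```

Doubling the path difference halves the first null frequency and halves the spacing between nulls, which is why widely separated sources produce a much denser comb than closely spaced ones.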

So, if at some distance from the acoustic center of each speaker we are exactly as far away from one as from the other, and if the sound at our listening location is not dominated by room reflections, then the two outputs can be expected to combine in phase for up to a +6 dB increase in SPL (a doubling of sound pressure).

However, if we move off to an angle on one side, we have changed the distance one wave must travel to reach us, so the two sources will no longer be in perfect synchrony. If the phase difference is 90 degrees, the sum shows a 45 degree phase shift (relative to either speaker alone) and up to a +3 dB SPL change. At 180 degrees out of phase, we should expect a complete cancellation of the sound. Since the sound in real rooms at distances of more than a few inches from the speaker is dominated by room effects and reflections, this complete cancellation does not happen in practice. What this means in actuality is that even if the frequency response started out as a flat line with one channel playing, once we turn on the second speaker the composite frequency response may look like anything but flat, although it may well still sound just fine.

Editorial Note: The Math behind adding signals out of phase

Let's add two vectors, C1 and C2. The first (reference, in phase) is C1 = 1 <0; the second (90 degrees out of phase) is C2 = 1 <90.

Converting to rectangular form gives C1 = 1 + j0 and C2 = 0 + j1.

Adding gives C1 + C2 = (1 + 0) + j(0 + 1), which is 1 + j1.

Converting to polar gives magnitude = square root of (1 squared + 1 squared), which is 1.414,

and phase = arctangent of 1/1, which is 45 degrees,

so the sum is 1.414 <45.

Because these vectors represent sound pressure amplitudes, converting the magnitude to dB gives dB = 20 log 1.414, which is 3.01 dB.
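The same vector addition can be tried for any phase difference with a short Python sketch. It assumes the vectors represent sound pressure amplitudes, for which the level change is 20 log10 of the magnitude ratio (a 10 log10 conversion applies to power quantities instead). The function name is hypothetical.

```python
import cmath
import math


def sum_two_sources(phase_difference_deg, amplitude=1.0):
    """Add two equal-amplitude phasors and return (magnitude, phase in
    degrees, level change in dB relative to one source alone)."""
    c1 = cmath.rect(amplitude, 0.0)                                 # reference, 0 degrees
    c2 = cmath.rect(amplitude, math.radians(phase_difference_deg))  # shifted source
    total = c1 + c2
    magnitude = abs(total)
    phase_deg = math.degrees(cmath.phase(total))
    if magnitude > 1e-12:
        # Pressure amplitudes convert to dB with 20*log10.
        level_db = 20.0 * math.log10(magnitude / amplitude)
    else:
        level_db = float("-inf")  # complete cancellation
    return magnitude, phase_deg, level_db


if __name__ == "__main__":
    for angle in (0, 90, 120, 180):
        print(angle, sum_two_sources(angle))
```

With this convention, 0 degrees gives a doubling of pressure (about +6 dB), 90 degrees gives a magnitude of about 1.414 at 45 degrees (about +3 dB), 120 degrees gives no change, and 180 degrees gives complete cancellation.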

It’s important to note that this description applies only to a microphone, or to a one-eared listener. Humans have two ears separated by an acoustical obstacle, the head, and therefore are somewhat prepared to deal with the issue because the interference peaks and dips occur at different frequencies in each ear. Perception in this case is determined by what is called a “central spectrum” – a cognitive (involving thought) combination of the sounds at both ears. Hence we begin this discussion with a problematic relationship between what is heard by humans with two ears and what is measured by a single microphone.

Now if those two tweeters are very close, the difference in arrival time at your ear is so small that you cannot distinguish the sound from one as direct and the sound from the other as indirect or coming at you from an entirely different location. Thus we cannot equate the two phenomena (room reflections and acoustic interference) and declare, “Comb filtering is inaudible.” What we can say is that there are some people who misapply the studied science of comb filtering. Multiple sound sources in a room separated by a large distance, and the acoustical interference of a single-source loudspeaker producing sound from multiple drivers (separated by a relatively large distance compared to the wavelengths they are producing), are two different phenomena and should not be confused or used interchangeably despite their similarities. Doing so is akin to announcing that there is no difference between direct and reflected sound, and that all sounds perceived as echoes are simply artifacts, not something you can actually hear.

AR “Dual Dome” assembly

AR was the first major speaker company to recognize this phenomenon, with the invention of the “Dual Dome” midrange-tweeter assembly in the 1982 AR-9LS. 1978’s AR-9 introduced the first intentionally vertical array of midrange/tweeter drivers, to eliminate interference between the drivers in the horizontal plane. But AR realized that there was still interference between the upper range drivers in the vertical plane as well, because of the physical distance separating the upper midrange dome and the tweeter. So AR produced the “Dual Dome” unit, which mounted the 1 ½” upper mid and the ¾” tweeter domes very close to each other (well within the 2” wavelength of their 7000 Hz crossover frequency) by building a massive magnet assembly with two VC carriers which drove both domes at once. Thus, the upper mid and tweeter domes operated as a single acoustic unit, with no horizontal or vertical acoustical interference or picket fencing throughout their combined frequency ranges. That was 30 years ago, but few manufacturers really pay much attention to that degree of detail anymore.

A programmer by the name of Paul Falstad has written a program that allows a PC user to hear acoustic cancellations, and has made the utility available online, best of all, for free. For those of you adventurous enough to test the theory yourselves, the program can be found at the links below.

http://www.falstad.com/mathphysics.html

http://www.falstad.com/interference/

Now someone is going to rightly point out (as Paul Klipsch did many years ago) that at a high enough frequency, your ears are many degrees out of phase. True enough. That we do not hear the way a microphone measures is not in dispute. Our ear takes the amplitude of the varying wave and integrates it over time. We don't hear 2000 Hz as 2000 separate pushes and pulls of the air. What we hear instead is a constant tone, despite the fact that the pressure at our ears is changing very rapidly.

So, how does the ear/brain mechanism deal with this? Are we simply incapable of hearing it? No. Below about 1-2 kHz the ear/brain progressively becomes able to track the waveform. At higher frequencies it cannot, but the ability to recognize pitch accurately is uncanny, so there is more going on than meets the eye. At high frequencies we track envelopes, and this allows us to localize amplitude-modulated and transient high frequency sounds with great precision; in fact it is the dominant factor in sound localization in rooms. So, although we don’t track the detailed structure of high-frequency sounds, we do track the modulation envelopes.

Much of the perceptual “averaging” that happens when listening to music exists because music is not a stationary signal, and there is an ever-changing pattern of inputs. What we listen to is music, filled with many frequencies at once (which are constantly changing, and even have vibrato, so the interference patterns never really stabilize long enough to be clearly audible), in a room where reflections create a similar kind of alternating plus and minus in the amplitude of the signal that reaches us. It is because we listen to music in reflective rooms that we have become accustomed to this effect. If the music signal's Q is 0.1 (wideband) while the comb filtering produces narrow dips spaced at close intervals (Q = 20, for example), then the dips will not predominate over what we hear. (In other words, our ears are averaging the energy that reaches them not only over a specific time, but over a specific range of similar frequencies!) If we as humans were equipped to hear all these acoustical effects as destructive, our voice and sound recognition system would be so fragile and sensitive to the environments in which we found ourselves that we would probably have been unlikely to survive as a species.

What direction is the danger coming from? I can hear mom calling, or is that someone else's mom? With a narrow enough steady-state (as opposed to transient) signal, we can demonstrate that comb filtering is audible. If a transient is very brief, the direct sound will come and go before a reflection can arrive, so no interference is produced. Traditional frequency response measurements end up being “steady state,” so they don’t necessarily depict what we will hear with real program material.

In a non-anechoic room, we hear an increased sense of space due to reflections from the separated stereo pair of speakers. It is an effect of real rooms we deal with in everyday life when listening to sounds that are rather wideband in their energy distribution vs. frequency. Every room has a multitude of reflections and peaks and dips. There is no survival advantage for us to be able to hear them all, so the ear-brain tends to average the SPL (peaks and dips both) over a given bandwidth averaging the amplitude of the signal. Multiple tones within a critical bandwidth are not identifiable as separate tonal events but they do beat together at rates determined by the frequency differences. At low frequencies we have all heard this as the cyclical tone; at higher frequencies it is called “roughness” and is the basis for “rosin on the bow” kinds of sounds, which are important aspects of music.

Assuming this is true, what it means is that our ear-brain mechanism is going to average those peaks and dips together, so assuming their distribution is evenly spaced, the effect on the tonal character of music will be negligible (assuming the music signal is wideband, and not very narrow in bandwidth like a pure tone). Something to remember is that interference peaks and dips do not ring. Resonance peaks ring, and therefore are the things we easily hear. That is also why, in measurements, it is essential to do spatial averaging so that one can identify whether a peak is due to a resonance (get busy and fix it) or interference (it may or may not even be audible).
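The averaging argument can be illustrated numerically. The sketch below models the simplest comb filter, a direct sound plus one equal-level copy delayed by a fixed time, and averages its power over a frequency band. The linear frequency averaging is only a crude stand-in for how hearing integrates energy, not a model of the ear, and the function names and the 0.25 ms delay (the 3.39 inch path difference used earlier) are illustrative assumptions.

```python
import math


def comb_power(frequency_hz, delay_s, reflection_gain=1.0):
    """Power response |1 + g*exp(-j*2*pi*f*tau)|^2 of a direct sound
    summed with one delayed copy of relative gain g."""
    phase = 2.0 * math.pi * frequency_hz * delay_s
    return 1.0 + reflection_gain ** 2 + 2.0 * reflection_gain * math.cos(phase)


def band_average_db(f_low_hz, f_high_hz, delay_s, reflection_gain=1.0, steps=10000):
    """Average the comb power over a band (midpoint sampling) and
    express it in dB relative to the direct sound alone."""
    total = 0.0
    for i in range(steps):
        f = f_low_hz + (f_high_hz - f_low_hz) * (i + 0.5) / steps
        total += comb_power(f, delay_s, reflection_gain)
    return 10.0 * math.log10(total / steps)


if __name__ == "__main__":
    delay = 0.00025  # seconds, i.e. 3.39 inches at 13560 inches per second
    print(band_average_db(2000.0, 6000.0, delay))  # wide band, null to null
    print(band_average_db(1990.0, 2010.0, delay))  # narrow band centered on a null
```

Averaged from one null to the next, the deep dip and the peak balance out to roughly a +3 dB gain over a single source, while a very narrow band parked on the null stays tens of dB down. That is consistent with the point above: a wideband signal largely hides the comb, and a narrow steady-state tone exposes it.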

Audibility of Acoustical Interference & Loudspeaker Design Implications

For very high frequencies, the loudspeaker designer must take extra care to keep multiple tweeters positioned as closely together as driver size and cabinet configuration will allow. If the speakers are allowed to operate over a range above where the physical distance between the sources is large compared to the wavelengths of sound, then acoustical interference starts becoming a problem (i.e., placing tweeters on opposite ends of the cabinet as pictured here). Serious designers making top shelf products will avoid making this mistake. Luckily you won't find this type of speaker on the market anymore, but in the '70s and early '80s they were pretty commonplace.

It's important to note that multiple tweeters in a loudspeaker are NOT necessarily a bad thing. Some designers employ multiple woofers and tweeters in such a way as to average the lobing response errors, which can result in more consistent sound off axis and thus provide a wider listening area. (The argument is that there are so many peaks and dips that they should average themselves away.) This can be seen in the example below for the RBH Sound SI-6100/R in-wall speaker.

The audible effects of comb filtering between different radiating sound sources playing the same program material vs acoustical interference between multiple drivers of the same sound source are dependent upon loudspeaker driver and cabinet design, room design, and listener position, distance from the sources and the program material being reproduced. Multi driver systems should NOT just be blindly discounted as invalid designs. If properly executed, these systems can provide much greater dynamic range and a larger soundstage than a competing conventional 2 or 3 way design.

A lot has to do with the distance from the speakers at which one listens and the dispersion characteristics of the speakers. If the listener is close to the speakers—say less than about 3 feet—he will be in what many engineers call the “near field,” meaning the soundfield where the sound that reaches the listener’s ear is primarily direct sound from the speaker with relatively little room reflection.

Moving farther back away from the speakers, the listener will then be in what many refer to as the “critical distance,” which is that distance from the speakers where the sound reaching the listener’s ears is a good mix of direct sound from the speaker and reflected sound from the room’s surfaces.

Farther away from the speakers yet and the listener is in the “reverberant” or “far field,” where the sound reaching the listener’s ears is dominated by room reflections and contains a much smaller percentage of direct sound from the speaker.

Speakers with wide, uniform dispersion (uniform meaning that the designer has chosen the driver sizes, cabinet design, and crossover points such that the speaker’s dispersion remains relatively constant over a wide angle even as the frequency increases) will engage more room reflections than a speaker with narrower dispersion, like a horn speaker that intentionally limits its dispersion to a specified angle.

This very design aspect is a point of greatly differing opinion among well-respected speaker designers. Many designers like speakers to have wide dispersion—but not too wide—so that listeners seated 30 or 45 degrees off axis can still hear the direct first-arrival sound from the speaker clearly. Wide enough to create enough room reflections for a feeling of “spaciousness,” but narrow enough to be focused enough to give listeners a sense of immediacy and sharp imaging.

Allison:One Speaker System

Some famous designers have built speakers—like Roy Allison’s AR-3a, AR-LST and Allison:One— which aimed for extremely wide dispersion and optimal far-field response (sometimes called Power Response), feeling that that was what listeners really heard in a normal listening room, not the so-called first-arrival on-axis anechoic response of the speaker.

Bose 901 Speaker System

Bose has taken this approach to a further extreme, by designing most of its speakers to produce the maximum amount of room reflections possible and limit the direct, localizable sound from the speaker. With that said, we should discuss the loudspeaker power response and its implications.

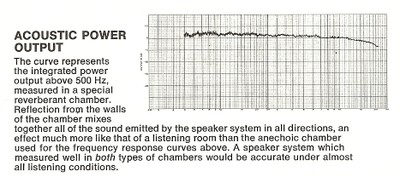

Loudspeaker Power Response

Power Response is the sum of the total radiated acoustic output of a loudspeaker as measured in a sphere around the speaker at several incremental intervals on- and off-axis in the far (reverberant) field. This measurement essentially captures the total sound emitted by a loudspeaker at all frequencies, in all directions, and is therefore thought by proponents of this measurement method to be more representative of how a speaker will sound in an acoustically well-balanced listening environment than by what can be inferred from a simple on-axis anechoic frequency response measurement.
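As a rough illustration of what "summing" the output around a sphere means at a single frequency, the sketch below power-averages a set of SPL readings taken at different angles. It is an assumption-laden simplification: real power response measurements use a defined grid of microphone positions with appropriate area weighting, and the function name, the optional weights, and the sample readings are all hypothetical.

```python
import math


def power_average_db(levels_db, weights=None):
    """Power-average SPL readings (in dB) taken at different angles.

    Each reading is converted to a relative power (10^(L/10)), the
    powers are averaged with optional weights, and the mean is
    converted back to dB. Equal weights are assumed by default.
    """
    if weights is None:
        weights = [1.0] * len(levels_db)
    if not levels_db or len(weights) != len(levels_db):
        raise ValueError("levels_db must be non-empty and match weights in length")
    total_weight = sum(weights)
    mean_power = sum(
        w * 10.0 ** (level / 10.0) for w, level in zip(weights, levels_db)
    ) / total_weight
    return 10.0 * math.log10(mean_power)


if __name__ == "__main__":
    # Hypothetical readings at one frequency: on-axis, then further off-axis.
    print(power_average_db([90.0, 88.0, 84.0, 80.0]))
```

Because the averaging is done on power rather than on the dB values, the louder directions dominate the result: a 90 dB and an 80 dB reading average to about 87.4 dB, not 85 dB.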

The majority of today’s top speaker designers seem to engineer their speakers to have a very uniform, “correct” (no major peaks or dips) on-axis near-field frequency response, with a minimum of interference-producing protrusions on the baffle and correctly-aligned vertical drivers (M-T above the woofer, not side-by-side). This optimizes the speaker’s response close up—minimal comb filtering and interference between the Mid and Tweeter in the horizontal plane in the crossover overlap region—while preserving the speaker’s inherent far field power response characteristics.

Power Response Measurement

Important Design Considerations

A speaker designed to have proper, correct, uniform response relatively close-up can still have an excellent far-field power response. Let’s say, for purposes of this example, you have a 10” 3-way speaker with a 4 ½” midrange and a 1” dome tweeter. The crossover points are 500 Hz and 2500 Hz. These crossover points use the drivers in frequency ranges where they do not become directional (a 10” woofer is ‘good’ to around 1300 Hz, and a 4 ½” mid is ‘good’ up to around 3kHz), so the speaker has wide dispersion. If the drivers are all in a vertical line, then any serious crossover region interference (or acoustical interference) between the drivers will be limited to the vertical plane, which is less important for listening area coverage (and listener positioning) than the horizontal plane, since listeners can be spread out several feet laterally on the sofa and chairs, but their seated ear positions are likely to be all within a tight 6” or so window vertically.

But that very same speaker with the same drivers and crossover points lying on its side will have a markedly different near-field frequency response when off-axis horizontally, since its drivers are now horizontally arrayed and all the interference between the drivers is now thrown into the horizontal plane. So, things may be OK at 0 degrees, but at 15, 30, 45, 60, etc. degrees off the horizontal axis in the near field (the way people sit, from one side of the room to the other), the horizontal speaker will sound quite different from its vertical counterpart, as the radiation patterns from its drivers overlap, canceling and reinforcing each other’s outputs in the critical horizontal plane.
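The geometry behind this can be sketched as follows. The sketch assumes two drivers radiating the same frequency at equal level with no crossover phase shift, and a listener many driver spacings away so that the two paths are nearly parallel; under those assumptions the path difference is roughly the spacing times the sine of the off-axis angle measured in the plane containing both drivers. The 6 inch spacing and the function names are hypothetical.

```python
import math

# Speed of sound in inches per second, the figure used in the text.
SPEED_OF_SOUND_IN_PER_S = 13560.0


def path_difference_in(spacing_in, off_axis_deg):
    """Approximate extra distance to the farther driver, for a listener
    off-axis in the plane that contains both drivers."""
    return spacing_in * math.sin(math.radians(off_axis_deg))


def first_null_hz(spacing_in, off_axis_deg):
    """Lowest frequency at which the path difference is half a
    wavelength. Returns infinity when there is no path difference."""
    diff = abs(path_difference_in(spacing_in, off_axis_deg))
    if diff < 1e-9:
        return math.inf
    return SPEED_OF_SOUND_IN_PER_S / (2.0 * diff)


if __name__ == "__main__":
    for angle in (0, 15, 30, 45, 60):
        print(angle, first_null_hz(6.0, angle))
```

For drivers stacked vertically, a listener moving sideways stays at 0 degrees in the plane of the drivers, so the path difference stays near zero. Lay the same cabinet on its side and the same sideways move becomes an off-axis angle in that plane, pulling the first cancellation down toward the crossover region.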

Good engineers from the major ‘name’ speaker companies know this, which is why virtually every serious music speaker from a good speaker company has its drivers aligned vertically, so that its horizontal nearfield response is smooth and interference-free. It makes no difference whether they’re aluminum mids or beryllium or Kortec tweeters or paper or polypropylene woofers, sealed, ported, transmission line or passive radiator. Good engineers put their drivers in a vertical line to minimize midrange-treble acoustical interference between drivers in the horizontal plane.

Note that the far-field response of our hypothetical 10” 3-way speaker will be much the same, whether it’s standing up vertically or lying down horizontally (assuming it’s at roughly the same height with respect to the listener’s ears and with respect to the room’s major reflective boundaries). In the far or reverberant field, it’s the speaker’s total acoustic output that counts, and that will be the same whether it’s mounted vertically or horizontally.

So the takeaway is this: You can design a speaker that performs well and is nicely behaved in the near field without sacrificing its reverberant field behavior. But the reverse is not necessarily true—a speaker that ignores near-field aberrations, driver placement issues which create acoustic interference, baffle/grille protrusion interference issues, etc. to concentrate solely on far-field performance can sound much worse in the near or critical field than one designed to perform well at all distances. The AR-3a—a very famous speaker known for its smooth far-field response—was humorously criticized by a well-known audio critic as having a “cacophony of near-field phase and interference issues”—because of its non-vertically-aligned drivers and obtrusive, interference-producing decorative cabinet molding.

Some 20 years after the 3a’s introduction, AR came out with a vastly improved model called the AR-78LS, which used very similar drivers (a 12” sealed woofer, and dome midrange and tweeter units like the 3a, but now with ferrofluid cooling, which the 3a’s drivers didn’t have). However, the 78LS’s drivers were all in a neat vertical line and the M-T was a Dual-Dome unit, so they acted as one driver with virtually no acoustical interference either horizontally or vertically. Additionally, the baffle/grille was totally free of any acoustic obstructions. Result? Unlike the 3a, the 78LS had superb near-field and far-field response. With intelligent design choices and an awareness of basic acoustic information, speakers can perform exactly the way the designer intends, without any surprises.

Vintage Acoustic Research Speakers: AR-3a (left pic) and AR-78LS (right pic)

The “rules” of directionality, dispersion, how much/little a speaker will engage the room by virtue of its dispersion, the degree of audibility of a speaker’s first-arrival sound in the near, critical, and reverberant fields are known bits of information.

A given designer may subscribe to any of the following design philosophies:

- “First arrival—smooth on-axis FR— dominates the listener’s perception!”

- “Far-field power response as a determinant of a speaker’s perceived tonal balance is a discredited, outdated concept!”

- “Wide-dispersion loudspeakers convey a greater sense of ‘air’ and three-dimensionality than narrow-dispersion speakers,” etc.

Regardless, there is no reason the designer can’t achieve exactly what they intend to accomplish.

Conclusion

Both comb filtering (acoustic interaction between widely-spaced multiple sources) and acoustical interference (the acoustic aberrations that emanate from a single source employing multiple drivers) are acoustic artifacts that can impact the nature of reproduced sound as it is perceived by a human listener with two ears. Although the audibility of these phenomena when listening to complex, highly dynamic source material played in a fairly “live” environment while in the critical or far field may be somewhat limited, they are, nonetheless, audible, sometimes detrimentally so. Potential acoustical interference between multiple drivers in the same loudspeaker cabinet is something any serious designer should be concerned about, and it should not be brushed off as a measurement artifact without real world implications. The important thing is for speaker designers to have clearly defined goals (near- vs. far-field optimization, to what degree they feel wide high frequency dispersion is important, etc.) and to intentionally take these phenomena into account when developing their loudspeakers.

Acknowledgements

I would like to personally thank the following people for their contributions and/or peer review of this article, all of whom are true experts in their respective fields. Their contributions enabled us to make the most comprehensive and accurate article possible on the very complex topic of loudspeaker behavior dealt with herein.

- Paul Apollonio, CEO of Procondev, Inc

- Paul Ceurvels, Design Engineer, Atlantic Technology

- Steve Feinstein, Audio Industry Consultant

- Shane Rich, Technical Director of RBH Sound

- Mark Sanfilipo, Audioholics.com Resident Speaker Expert and Writer

- Dr. Floyd Toole, PhD & Chief Science Officer of Harman